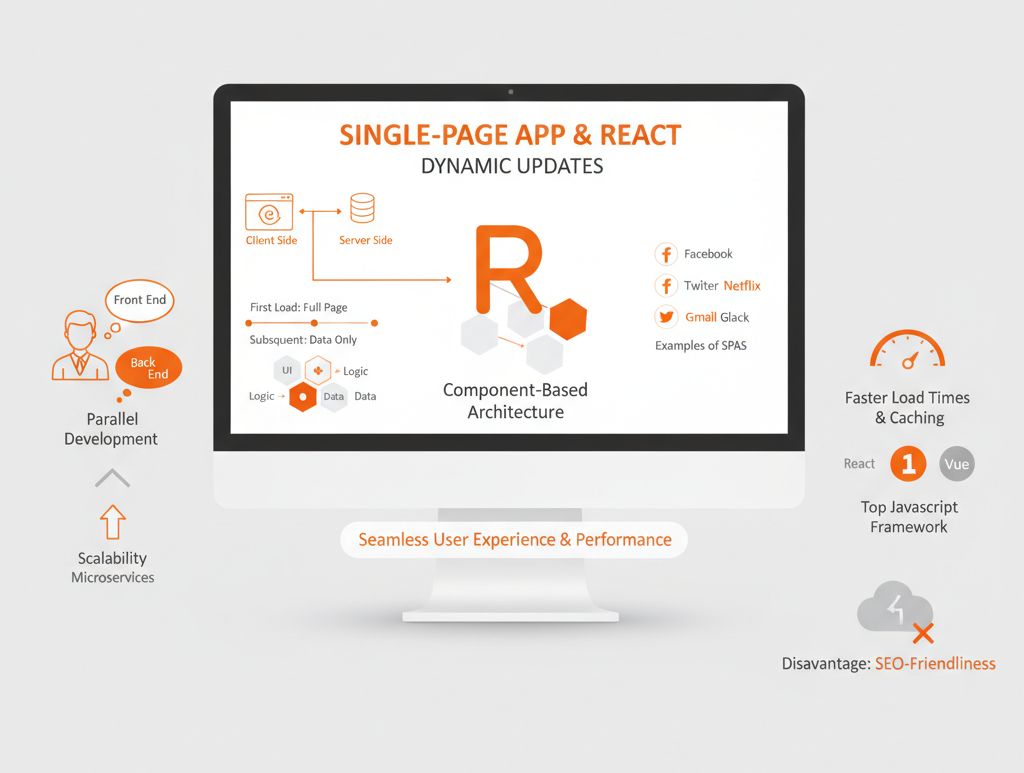

React-driven single-page applications (SPAs) are gaining momentum. Used by tech giants like Facebook, Twitter, and Google, React allows for building fast, responsive, and animation-rich websites and web applications with a smooth user experience.

However, products created with React (or any other modern JavaScript framework like Angular, Vue, or Svelte) have limited capabilities for search engine optimization. This becomes a problem if a website mostly acquires customers through website content and search marketing. Given that 93% of customers’ online experiences start with search engines, React-based SPAs put businesses’ online strategies at risk.

In this article, we’ll explore ready-made solutions for React SEO optimization that help you achieve visibility in search engines while preserving all the benefits of SPAs and React programming.

What’s a Single-Page App, and Why React?

A single-page application (SPA) is a web application whose content is served in a single HTML page. This page is dynamically updated, but it doesn’t fully reload each time a user interacts with it. Instead of sending a new request to the server for each interaction and then receiving a whole new page with new content, the client side of a single-page web app only requests and loads data that needs to be updated.

Facebook, Twitter, Netflix, Trello, Gmail, and Slack are all examples of single-page apps.

From the developer’s perspective, SPAs keep the presentation layer (the front end) and the data layer (the back end) separate, so two teams can develop these parts in parallel. Also, with an SPA, it’s easier to scale and use a microservices architecture than it is with a multi-page app.

What’s Wrong With Optimizing React Apps for SEO?

Typically, single-page apps load pages on the client side: at first, the page is an empty container; then JavaScript pushes content to this container. The simplest HTML document for a React app might look as follows:

```html
<html lang="en">
  <head>
    <title>React App</title>
  </head>
  <body>
    <noscript>You need to enable JavaScript to run this app.</noscript>
    <div id="root"></div>
    <script src="/static/js/bundle.js"></script>
  </body>
</html>
```

As you can see, there’s nothing except the <div> tag and an external script. Single-page applications need a browser to run a script, and only after the script is run will the content be dynamically loaded to the web page. So when a crawler visits the website, it sees an empty page without content. Hence, the page cannot be indexed.
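To make this concrete, the sketch below approximates what a crawler that doesn’t execute JavaScript can extract from such a document. The `visibleText` helper is hypothetical and relies on rough regular expressions rather than a real HTML parser, so treat it as an illustration only. The server-rendered variant and its sample content are also made up for comparison.

```javascript
// Rough approximation of a crawler that downloads HTML but never runs scripts.
// `visibleText` is a hypothetical helper, not part of any library.
function visibleText(html) {
  return html
    .replace(/<head[\s\S]*?<\/head>/gi, " ")         // metadata is not page content
    .replace(/<script[\s\S]*?<\/script>/gi, " ")     // scripts are never executed
    .replace(/<noscript[\s\S]*?<\/noscript>/gi, " ") // fallback notice carries no real content
    .replace(/<[^>]+>/g, " ")                        // drop the remaining tags
    .replace(/\s+/g, " ")
    .trim();
}

const spaShell = `<html lang="en">
<head><title>React App</title></head>
<body>
<noscript>You need to enable JavaScript to run this app.</noscript>
<div id="root"></div>
<script src="/static/js/bundle.js"></script>
</body>
</html>`;

// The same page with its content already present in the HTML (sample content).
const serverRendered = spaShell.replace(
  '<div id="root"></div>',
  '<div id="root"><h1>Latest posts</h1><p>React SEO basics</p></div>'
);

console.log(JSON.stringify(visibleText(spaShell)));       // ""
console.log(JSON.stringify(visibleText(serverRendered))); // "Latest posts React SEO basics"
```

The client-rendered shell yields no text at all, while the version with content in the HTML gives the crawler something to index.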

Although Google’s bots have been able to process JavaScript and CSS for years, JavaScript-heavy web pages still face significant SEO problems, even in 2025. These challenges continue to affect how effectively sites built with frameworks like React are indexed and ranked.

Long delays

If the content on a page updates frequently, crawlers should regularly revisit the page. This can cause problems, since reindexing may happen only a week after the content is updated.

This happens because the Google Web Rendering Service (WRS) enters the game. After a bot has downloaded HTML, CSS, and JavaScript files, the WRS runs the JavaScript code, fetches data from APIs, and only after that sends the data to Google’s servers.

Limited crawling budget

The crawl budget is the maximum number of pages on your website that a crawler can process in a certain period of time. Once that time is up, the bot leaves the site no matter how many pages it’s downloaded (whether that’s 26, 3, or 0). If each page takes too long to load because of running scripts, the bot will simply leave your website before indexing it.
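A bit of arithmetic shows why slow rendering hurts. The toy model below assumes the crawler has a fixed time budget and processes pages one after another. The budget, page count, and per-page timings are invented for illustration, and real crawlers schedule their work in more complex ways.

```javascript
// Toy model: the crawler spends a fixed time budget and stops when it runs out.
// All numbers are illustrative assumptions, not measurements of any real crawler.
function pagesCrawled(budgetMs, msPerPage, totalPages) {
  const affordable = Math.floor(budgetMs / msPerPage);
  return Math.min(totalPages, affordable);
}

const budgetMs = 10000; // assumed 10-second budget for the whole site
const totalPages = 50;

console.log(pagesCrawled(budgetMs, 200, totalPages));  // 50: fast, ready-made HTML
console.log(pagesCrawled(budgetMs, 2500, totalPages)); // 4: slow client-side rendering
```

Under these assumptions, the same budget covers the whole site when each page takes 200 ms, but only 4 of 50 pages when each page needs 2.5 seconds of script execution.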

As for other search engines, Yahoo’s and Bing’s crawlers may still see an empty page instead of dynamically loaded content. So getting your React-based SPA to rank at the top of these search engines remains an elusive goal.

You should think about how to solve this problem at the stage of designing the app architecture.

How To Make React Websites and Apps SEO-Friendly

Achieving effective React SEO requires following proven best practices tailored to overcome the challenges of client-side rendering. Following these approaches helps maintain great user experiences while boosting your React app’s discoverability in search results.

Isomorphic React apps

In plain English, an isomorphic JavaScript application (or in our case, an isomorphic React application) can run on both the client side and the server side.

Thanks to isomorphic JavaScript, you can run the React app and capture the rendered HTML file that’s normally rendered by the browser. This HTML file can then be served to everyone who requests the site (including Googlebot).

On the client side, the app can use this HTML as a base and continue operating on it in the browser as if it had been rendered by the browser. When needed, additional data is added using JavaScript, as an isomorphic app is still dynamic.

An isomorphic app determines whether the client is able to run scripts or not. When JavaScript is turned off, the code is rendered on the server, so a browser or bot gets all meta tags and content in HTML and CSS.

When JavaScript is on, only the first page is rendered on the server, so the browser gets HTML, CSS, and JavaScript files. Then JavaScript starts running and the rest of the content is loaded dynamically. Thanks to this, the first screen is displayed faster, the app is compatible with older browsers, and user interactions are smoother in contrast to when websites are rendered on the client side.
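The core idea can be sketched without any framework: one rendering function is shared by the server and the browser. In the sketch below, plain template strings stand in for React components, and the names `renderPostList`, `renderDocument`, `updateInBrowser`, and `window.__INITIAL_STATE__` are our own assumptions. In a real React app, `renderToString` from `react-dom/server` and `hydrateRoot` from `react-dom/client` play the corresponding roles.

```javascript
// Shared rendering code: the same function can run in Node.js and in the browser.
// Plain template strings stand in for React components in this sketch.
function escapeHtml(text) {
  return String(text)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

function renderPostList(posts) {
  const items = posts.map((post) => `<li>${escapeHtml(post.title)}</li>`).join("");
  return `<ul>${items}</ul>`;
}

// Server side: produce a complete document that crawlers can read without JavaScript.
function renderDocument(posts) {
  // Escape "<" so the serialized state cannot close the script tag early.
  const state = JSON.stringify(posts).replace(/</g, "\\u003c");
  return [
    "<!DOCTYPE html>",
    '<html lang="en">',
    "<head><title>Blog</title></head>",
    "<body>",
    `<div id="root">${renderPostList(posts)}</div>`,
    `<script>window.__INITIAL_STATE__ = ${state};</script>`, // hypothetical variable name
    '<script src="/static/js/bundle.js"></script>',
    "</body>",
    "</html>",
  ].join("\n");
}

// Client side (inside bundle.js): re-render with the same function when data changes.
function updateInBrowser(rootElement, posts) {
  rootElement.innerHTML = renderPostList(posts);
}

const html = renderDocument([{ title: "React & SEO" }, { title: "Gatsby vs. Next.js" }]);
console.log(html);
```

Because the markup is already inside the root element when the document arrives, a bot gets the content immediately, and the browser script only has to take over from there.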

Building an isomorphic app can be really time-consuming. Luckily, there are frameworks that facilitate this process. The two most popular solutions for SEO are Next.js and Gatsby.

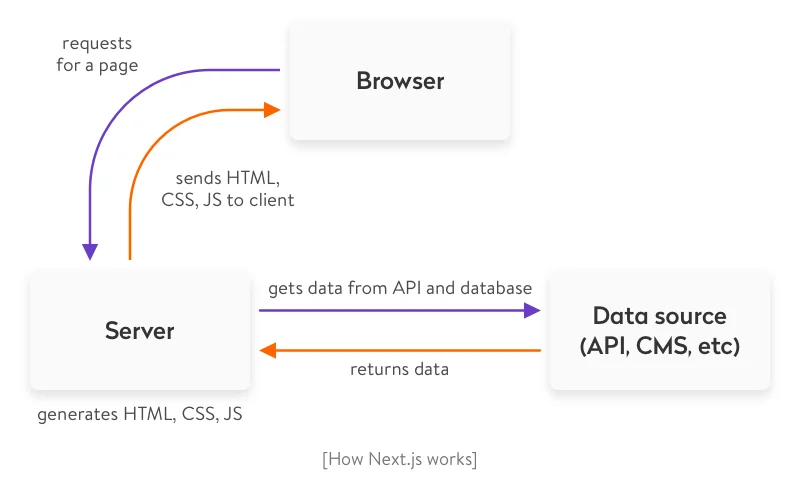

- Next.js is a framework that helps you create React apps that are generated on the server side quickly and without hassle. It also allows for automatic code splitting and hot code reloading. Next.js can do full-fledged server-side rendering, meaning HTML is generated for each request right when the request is made.

- Gatsby is a free open-source compiler that allows developers to make fast and powerful websites. Gatsby doesn’t offer full-fledged server-side rendering. Instead, it generates a static website beforehand and stores generated HTML files in the cloud or on the hosting service.

Let’s take a closer look at their approaches.

Here’s a concise comparison of GatsbyJS and Next.js, highlighting their rendering approaches, performance, and best use cases:

| Criteria | GatsbyJS | Next.js |
| --- | --- | --- |
| Rendering Type | Static Site Generation (SSG): all HTML pages are generated during the build phase | Server-Side Rendering (SSR): pages are generated on the server for each user request |
| SEO | SEO challenges are solved through pre-generated HTML pages | Also SEO-friendly since HTML is generated on the server per request |
| Data Updates | Data is fetched only during the build process; new content appears only after rebuilding | Data is updated dynamically on every client request |
| Performance | Very high loading speed because pages are static | High speed, though slightly lower due to runtime page generation |
| Best Use Case | Ideal for sites with infrequent updates: blogs, portfolios, landing pages | Ideal for dynamic apps: forums, social networks, content-heavy platforms |
| Hosting | Can be hosted on any static hosting or in the cloud | Requires a Node.js server to handle runtime requests |
| Data Fetching | During the build phase | On each user request |
| Main Advantage | Speed and deployment simplicity | Dynamic content and real-time updates |

Server-side rendering with Next.js

The Next.js rendering algorithm looks as follows:

- The Next.js server, running on Node.js, receives a request and matches it with a certain page (a React component) using a URL address.

- The page can request data from an API or database, and the server will wait for this data.

- The Next.js app generates HTML and CSS based on the received data and existing React components.

- The server sends a response with HTML, CSS, and JavaScript.
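The four steps above can be modeled in a few lines of plain Node.js code. This is a simplified sketch of the flow, not how Next.js is implemented internally. The `pages` table, `fetchPosts`, and `handleRequest` are hypothetical names. In an actual Next.js app using the Pages Router, a page’s `getServerSideProps` function fills the role that `loadData` plays here.

```javascript
// Hypothetical data source standing in for an API or database call.
async function fetchPosts() {
  return [{ id: 1, title: "React SEO basics" }];
}

// Each URL path maps to a page that knows how to load its data and render itself.
const pages = new Map([
  ["/", {
    loadData: async () => ({}),
    render: () => "<h1>Home</h1>",
  }],
  ["/blog", {
    loadData: async () => ({ posts: await fetchPosts() }),
    render: ({ posts }) =>
      `<h1>Blog</h1><ul>${posts.map((p) => `<li>${p.title}</li>`).join("")}</ul>`,
  }],
]);

async function handleRequest(urlPath) {
  const page = pages.get(urlPath);       // 1. match the request to a page by URL
  if (!page) {
    return { status: 404, body: "<h1>Not found</h1>" };
  }
  const props = await page.loadData();   // 2. wait for the data the page asked for
  const markup = page.render(props);     // 3. generate HTML from data and components
  const body =                           // 4. respond with HTML plus the client script
    `<!DOCTYPE html><html><body><div id="root">${markup}</div>` +
    `<script src="/static/js/bundle.js"></script></body></html>`;
  return { status: 200, body };
}

handleRequest("/blog").then((response) => console.log(response.status, response.body));
```

Since the data is awaited before the response is sent, the HTML that reaches a crawler already contains the post titles.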

Making a website SEO-friendly with GatsbyJS

With Gatsby, the process is divided into two phases: generating a static website during the build and processing requests during runtime.

The build time process looks as follows:

- Gatsby’s bundling tool receives data from an API, CMS, and file system.

- During deployment or a CI/CD pipeline run, the tool generates static HTML and CSS on the basis of data and React components.

- After compilation, the tool creates a folder for each page (for example, an about folder) with an index.html file inside. The website consists of only static files, which can be hosted on any hosting service or in the cloud.
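The build phase described above can be sketched as a function that turns content into a set of static files before any request arrives. `buildSite`, `outputPath`, and the page objects are hypothetical, and Gatsby’s real build pipeline is far more involved. The point is only the mapping from pages to files such as `about/index.html`.

```javascript
// Build-time sketch: turn content into static files before any request arrives.
// `buildSite` and the page objects are hypothetical; Gatsby's real build differs.
function outputPath(slug) {
  // "/" becomes index.html; "/about" becomes about/index.html
  const trimmed = slug.replace(/^\/+|\/+$/g, "");
  return trimmed === "" ? "index.html" : `${trimmed}/index.html`;
}

function renderPage(page) {
  return `<!DOCTYPE html><html><head><title>${page.title}</title></head>` +
    `<body><div id="root"><h1>${page.title}</h1><p>${page.body}</p></div></body></html>`;
}

function buildSite(pagesData) {
  const output = {};
  for (const page of pagesData) {
    output[outputPath(page.slug)] = renderPage(page);
  }
  return output; // a real build would write these files to disk
}

const files = buildSite([
  { slug: "/", title: "Home", body: "Welcome." },
  { slug: "/about", title: "About", body: "Who we are." },
]);

console.log(Object.keys(files)); // [ 'index.html', 'about/index.html' ]
```

Everything a crawler needs is produced once, at build time, which is why the resulting files can be served from any static host.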

Request processing during runtime happens like this:

- The hosting service instantly sends the HTML, CSS, and JavaScript files for the requested page, since they were already rendered during compilation.

- After JavaScript is loaded to the browser, the website starts working like a typical React app. You can dynamically request data that isn’t important for SEO and work with the website just like you work with a regular single-page React app.

Creating an isomorphic app is considered the most reliable way to make React SEO-compatible, but it’s not the only option.

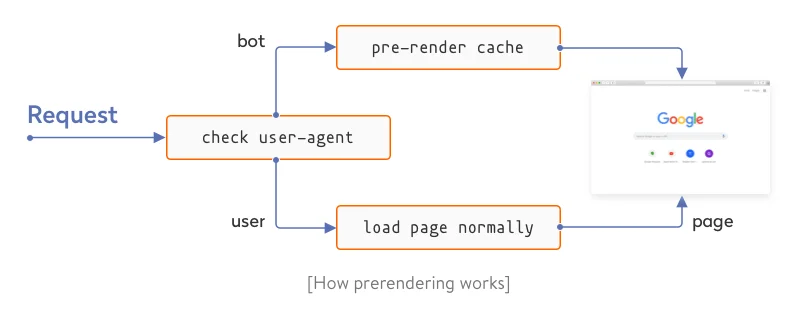

Prerendering

The idea of prerendering is to render all HTML elements of every SPA page ahead of time with the help of Headless Chrome and cache the resulting pages on the server. One popular way to do this is using a prerendering service like prerender.io. A prerendering service intercepts all requests to your website and, based on the User-Agent header, determines whether a bot or a user is viewing the website.

If the viewer is a bot, it gets the cached HTML version of the page. If it’s a user, the single-page app loads normally.
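The routing decision can be sketched as follows. This is not prerender.io’s code: `isBot`, `respond`, and the in-memory cache are hypothetical, and the pattern covers only a small sample of crawler User-Agent substrings.

```javascript
// Substrings that appear in the User-Agent headers of common crawlers.
// The list is a small illustrative sample, not an exhaustive registry.
const BOT_PATTERN = /googlebot|bingbot|slurp|duckduckbot|baiduspider|yandex/i;

function isBot(userAgent) {
  return BOT_PATTERN.test(userAgent || "");
}

// Hypothetical cache, filled ahead of time by a headless browser.
const prerenderedCache = new Map([
  ["/blog", '<html><body><div id="root"><h1>Blog</h1></div></body></html>'],
]);

const SPA_SHELL =
  '<html><body><div id="root"></div><script src="/static/js/bundle.js"></script></body></html>';

function respond(urlPath, userAgent) {
  if (isBot(userAgent) && prerenderedCache.has(urlPath)) {
    return prerenderedCache.get(urlPath); // bots get the cached, fully rendered HTML
  }
  return SPA_SHELL; // users get the normal single-page app
}

const googlebot = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)";
const chrome =
  "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36";

console.log(respond("/blog", googlebot) === SPA_SHELL); // false
console.log(respond("/blog", chrome) === SPA_SHELL);    // true
```

Falling back to the regular app when a page isn’t cached keeps bots from receiving an error, at the cost of serving them the empty shell for that page.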

Prerendering puts a lighter load on the server than server-side rendering does. On the other hand, most prerendering services are paid and work poorly with dynamically changing content.

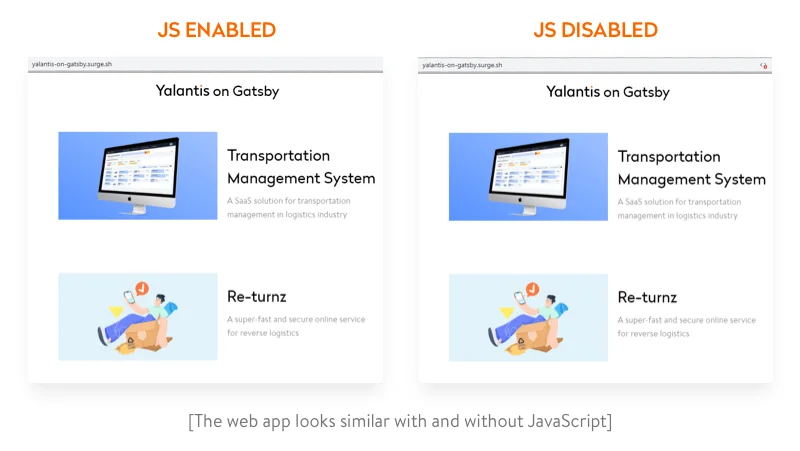

Using Gatsby: A Demo Project

To demonstrate how isomorphic React apps work, we’ve created a simple app for the Yalantis blog using Gatsby. We’ve uploaded the source code of the app to this repository on GitHub.

Gatsby renders only the starting page. Then the website works as a single-page app. To see how this isomorphic app works, just turn off JavaScript in your browser’s devtools. If you use Chrome, here are instructions on how to do that. If you refresh the page, the website should work just like it does with JavaScript turned on.

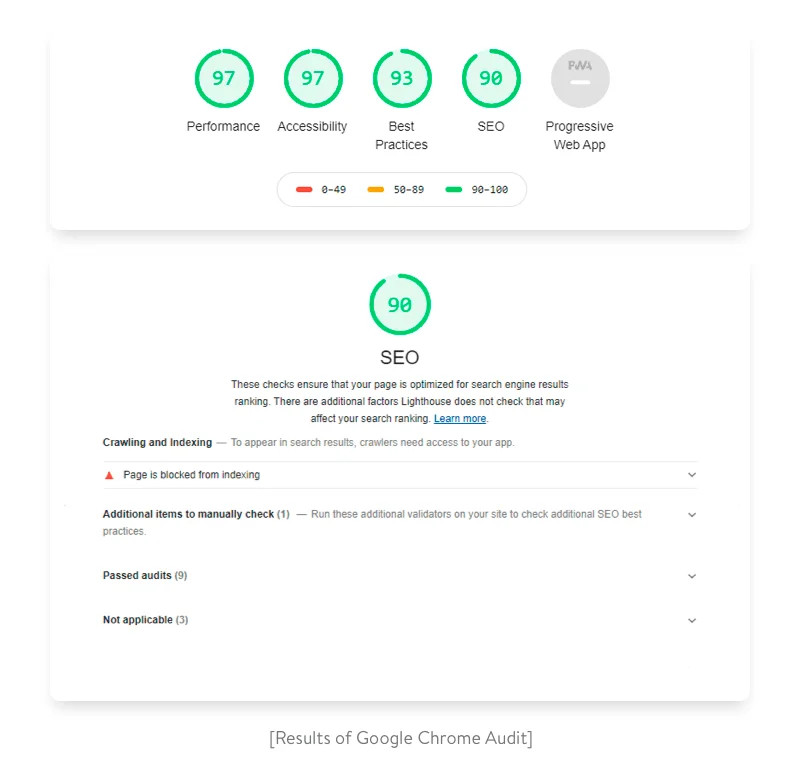

Now let’s check the audit result using Lighthouse. The performance score is close to 100, while the SEO score for the Gatsby.js application reaches 90.

If this website were on the production server, all indicators would be approximately 100.

Our Experience in Building React SEO-Optimized Apps

At Yalantis, one of our projects was focused on developing a web app for a Spanish real estate services provider. Since the market is highly competitive, we put a lot of effort into SEO. We consulted a dedicated company to come up with SEO-friendly solutions like:

- a URL-building approach based on avoiding query parameters in URLs while using search filters and following the URL parsing mechanism when implementing the required URLs;

- making use of metadata;

- introducing a dynamic XML sitemap and more.
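To give a rough idea of what the first and last items can look like in code, here is a minimal sketch. It isn’t the code from that project: the filter names, their order, the `example.com` domain, and all function names are assumptions made for illustration. The sitemap follows the public sitemaps.org format.

```javascript
// Hypothetical filter order for path-based URLs; a real project defines its own scheme.
const FILTER_ORDER = ["deal", "type", "city"];

function slugify(value) {
  return String(value)
    .normalize("NFD")
    .replace(/[\u0300-\u036f]/g, "") // strip accents so that "á" becomes "a"
    .trim()
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-")
    .replace(/^-+|-+$/g, "");
}

// { deal: "Rent", type: "Apartment", city: "Madrid" } -> "/rent/apartment/madrid"
function buildFilterPath(filters) {
  const segments = FILTER_ORDER
    .filter((key) => filters[key])
    .map((key) => slugify(filters[key]));
  return "/" + segments.join("/");
}

function escapeXml(text) {
  return text.replace(/&/g, "&amp;").replace(/</g, "&lt;").replace(/>/g, "&gt;");
}

// Builds a sitemap in the sitemaps.org format from the current list of pages.
function buildSitemap(baseUrl, entries) {
  const urls = entries
    .map(({ path, lastmod }) =>
      `  <url><loc>${escapeXml(baseUrl + path)}</loc><lastmod>${lastmod}</lastmod></url>`)
    .join("\n");
  return `<?xml version="1.0" encoding="UTF-8"?>\n` +
    `<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">\n${urls}\n</urlset>`;
}

const listingPath = buildFilterPath({ deal: "Rent", type: "Apartment", city: "Madrid" });
console.log(listingPath); // /rent/apartment/madrid
console.log(buildSitemap("https://example.com", [{ path: listingPath, lastmod: "2025-01-15" }]));
```

Generating the sitemap from the same data that drives the pages, on request or on a schedule, is what makes it dynamic: new listings appear in it without manual edits. Note that purely positional segments become ambiguous when a filter is skipped, so a production scheme needs a parsing rule for that case.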

Check out the results of our cooperation with Truhoo to get a full picture of what we achieved together.

The Bottom Line

Single-page React applications offer exceptional performance, seamless interactions close to those of native applications, a lighter load on the server, and ease of web development.

Challenges with SEO shouldn’t be a reason for you to avoid using the React library. Instead, you can use the above-mentioned solutions to address this issue. Moreover, search engine crawlers are getting smarter every year, so in the future, SEO may no longer be a pitfall of using React.
