Though more and more real estate platforms are developing mobile apps, having a web platform is still important. Over 90% of real estate firms have websites, according to the National Association of Realtors (NAR). Web development for real estate ensures that buyers and sellers can easily access property information and services through their browsers. Buying and selling houses are important decisions, so people don’t rely solely on mobile apps. A robust web presence continues to play a crucial role in the real estate market.

Most buyers turn on their laptops to search thoroughly. And statistics collected by NAR show that 95% of all homebuyers search online. Online search makes it easy for buyers to compare homes and choose the exact features they’re looking for. To make a strong first impression, a real estate web platform should work flawlessly and be handy for agents, brokers, home sellers, and home buyers.

Yalantis is a technical partner of Zillow, the top global real estate platform. Check out Zillow’s feedback on our cooperation on Clutch. We also have experience developing European real estate marketplaces. Our American and European clients usually come to us inspired by the market success of Zillow, Redfin, and Realtor.com. We’ll begin our post by analyzing this trio in terms of the fundamental aspect of any real estate platform: property listings. They’re key to success, as low-quality listing data, a lack of data, or duplicate listings will inevitably turn off users.

Where can a real estate platform get data for property listings?

There are three ways of ensuring a flow of real estate listing data to a real estate platform. The first is mainly applicable to the US market, while the others are applicable to any market.

1. Pulling data from MLSs

In the US, MLSs are common and are technologically supported by the National Association of REALTORS® (NAR), which ensures interoperability of data between MLS systems. As of 2025, there were 500 MLS systems in the US. Brokers participating in an MLS agree to share their listings with other MLS participants.

MLSs usually send data to their participants and technology partners through the RESO Web API standard. MLSs allow real estate portals like Zillow, Redfin, and Realtor.com to publish MLS listings on their websites.

Unlike in the US and Canada, MLS property technology is poorly developed in other countries. There are some forms of MLSs in European countries, including Germany, Spain, Italy, and the United Kingdom. However, these countries still lack regulations regarding real estate transactions. Consequently, differences in software packages across real estate agencies make it challenging to share data.

How can your platform pull data from an MLS?

MLSs share their data with participating brokers or software technology partners through their own web APIs. Trulia and Zillow get access to local MLSs by negotiating data sharing and syndication agreements. Redfin, on the other hand, doesn’t have any of that headache. As a brokerage, it’s free to use data available through its membership in the Realtors MLS.

In this regard, it seems like Zillow is doing things the hard way. After all, it could become a brokerage and pull data directly from an MLS. However, becoming a brokerage imposes a lot of legal obligations on a company: it then needs to participate in sales by representing buyers and sellers within its MLS, which basically means employing agents and managing them across the whole country.

Internet Data Exchange

It’s important to note that in the US, public property details should comply with certain rules established by the NAR and must follow the IDX (Internet Data Exchange) policy. To enable data exchange between an MLS database and your website, you need to integrate IDX.

IDX is a set of standards, rules, and technologies that track real estate data as it moves from an MLS to your platform. IDX moderates how your platform accesses MLS data and how it’s publicly displayed.

Methods of receiving MLS data

Depending on the MLS you need to take data from, your platform can get listings by means of different methods, including iFrame, FTP, and RETS.

The most modern method is the RESO Web API. Agents and MLSs are increasingly adopting this standard. It streamlines the movement of listings and can significantly cut local hosting and security costs. Paired with the RESO Data Dictionary, the RESO Web API standardizes the structure of property listings.
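The RESO Web API is built on the OData protocol, so a listing search is essentially an HTTP GET request with query options such as $filter and $select. Below is a minimal sketch of how such a request URL might be assembled. The base URL and the helper name are placeholders we made up for illustration; the field names come from the RESO Data Dictionary, but each MLS decides which fields it exposes and how you authenticate, so treat this as a starting point rather than a working integration.

```python
from urllib.parse import quote, urlencode

# Hypothetical endpoint: every MLS or vendor issues its own URL and credentials.
BASE_URL = "https://api.example-mls.com/reso/odata"


def build_property_query(city, max_price, min_bedrooms, top=25):
    """Build an OData query URL for the RESO Property resource."""
    # OData string literals are single-quoted; a quote inside is doubled.
    safe_city = city.replace("'", "''")
    filters = [
        "StandardStatus eq 'Active'",
        f"City eq '{safe_city}'",
        f"ListPrice le {int(max_price)}",
        f"BedroomsTotal ge {int(min_bedrooms)}",
    ]
    params = {
        "$filter": " and ".join(filters),
        "$select": "ListingKey,ListPrice,BedroomsTotal,City",
        "$orderby": "ListPrice asc",
        "$top": str(top),
    }
    # Keep "$" and "," readable; everything else is percent-encoded.
    query = urlencode(params, quote_via=quote, safe="$,")
    return f"{BASE_URL}/Property?{query}"


url = build_property_query("Austin", max_price=500000, min_bedrooms=3)
```

The resulting URL would be sent with whatever authorization header your MLS agreement specifies, and the response would be a JSON document with the matching listings.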

There are also third-party services that provide APIs that normalize data flows from MLSs. You can check out SimplyRets, ATTOM Property API, and Mashvisor API.

What is the simplest way to connect with multiple MLSs?

Because there are hundreds of MLSs all over the US, it’s extremely burdensome to negotiate agreements with all of them, especially if you operate nationwide. That’s why the largest aggregator websites enter into data-sharing agreements with national real estate companies to get their data directly from MLSs.

These companies include 365 Connect (a provider of technology for the housing industry), the RealBird social media and mobile listing marketing platform, and JBGoodwin REALTORS®. ListHub is one of the largest aggregators of real estate listing data in the US and used to be the main source of listings on Zillow and Trulia until their agreement ended in 2015. Now, ListHub collects and distributes real estate data from Realtor.com, Zillow, and other real estate websites.

You would need to do three things to get access to these platforms: get licensed in every state, join each MLS you need to access, and integrate data that doesn’t follow a consistent standard across hundreds of MLSs.

2. Encouraging agents, brokers, and landlords to publish properties

Zillow is doing fine attracting real estate agents and brokers to their website. The main way the company attracts landlords is by providing them with a convenient tool to manage all their listings in one place. Encouraging real estate professionals to share properties with your platform also has much to do with how you help them promote their services.

When building real estate platforms, we often implement optimized media services, ensuring a platform is capable of simultaneously uploading up to 50 images each up to 20 MB in size for any real estate listing. Such top-notch technical capabilities help agents present properties to potential buyers in detail. To optimize your media service, you can use tools like AWS Lambda.
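Limits like these are usually enforced before any file reaches storage. Here is a minimal sketch of such a check, using the 50-image and 20 MB figures mentioned above. The class and function names are illustrative, not taken from a real project.

```python
from dataclasses import dataclass

MAX_IMAGES_PER_LISTING = 50
MAX_IMAGE_BYTES = 20 * 1024 * 1024  # 20 MB


@dataclass
class UploadedImage:
    filename: str
    size_bytes: int


def validate_listing_images(images):
    """Return a list of problems; an empty list means the batch is acceptable."""
    problems = []
    if len(images) > MAX_IMAGES_PER_LISTING:
        problems.append(
            f"{len(images)} images submitted, limit is {MAX_IMAGES_PER_LISTING}"
        )
    for image in images:
        if image.size_bytes > MAX_IMAGE_BYTES:
            problems.append(f"{image.filename} is larger than 20 MB")
    return problems


batch = [
    UploadedImage("kitchen.jpg", 4 * 1024 * 1024),
    UploadedImage("drone-panorama.tiff", 35 * 1024 * 1024),
]
issues = validate_listing_images(batch)
```

Returning all problems at once, instead of failing on the first one, lets the agent fix the whole batch in a single pass.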

The list of benefits your platform might provide to real estate professionals is long, and choosing the right ones requires marketing research. Each successful real estate website uses its own hooks. Rightmove, the top real estate platform in the UK, provides agents with exclusive member benefits like marketing and communication tools, reports, and a telephone lead service so they don’t miss leads’ requests.

3. Encouraging homeowners to publish properties

Zillow also allows FSBO listings, enabling homeowners to list their own homes without the assistance of professional agents. Homeowners can post listings directly on the platform after registering. Listings are verified, and if they don’t violate Zillow’s rules, they’re posted on the platform.

Redfin takes information on for-sale-by-owner listings from Fizber.com and FSBO.com and doesn’t allow users to post FSBO listings directly on their website. But Redfin’s primary data source is MLSs, so if someone tries to post the same property both on an MLS and as an FSBO listing, the website will display only the MLS listing. Realtor.com is skeptical about FSBO listings, so it doesn’t allow homeowners to post them on the platform.

What’s interesting, however, is that properties on Zillow don’t necessarily have a “for sale” label. The owner of a property can state the price they’d be willing to sell their home for without actually putting it on the market. This type of listing is called Make Me Move. It’s a great way to measure interest from potential buyers and even get an unexpected offer. FSBO is potentially a great opportunity for emerging real estate businesses in the US and Europe.

Keep in mind that consumers are annoyed with seeing dozens of listings for the same property on real estate platforms. Try to avoid duplication of properties on your platform.

To ensure the uniqueness of listings on real estate platforms we build, we implement a moderation flow. When being published, all properties go through an automated check based on the property address. If the property is suspected of duplication, we pass it to the admin panel for a manual check. Additionally, you should allow agents to claim ownership of a property if they notice other agents offering it too.
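The moderation flow described above can be sketched roughly as follows. This is a simplified illustration, not our production code: the abbreviation table is deliberately tiny, and a real system would more likely rely on a geocoding or address-validation service than on string normalization alone.

```python
import re

# A small, illustrative set of abbreviations; real address data needs far more.
_ABBREVIATIONS = {
    "street": "st",
    "avenue": "ave",
    "road": "rd",
    "drive": "dr",
    "boulevard": "blvd",
    "apartment": "apt",
}


def normalize_address(address):
    """Lowercase, drop punctuation, and shorten common street words."""
    text = re.sub(r"[^\w\s]", " ", address.lower())
    words = [_ABBREVIATIONS.get(word, word) for word in text.split()]
    return " ".join(words)


class ModerationQueue:
    def __init__(self):
        self.published = {}       # normalized address -> listing id
        self.pending_review = []  # (new listing id, existing listing id)

    def submit(self, listing_id, address):
        """Publish a listing, or hold it for manual review if the address is taken."""
        key = normalize_address(address)
        existing_id = self.published.get(key)
        if existing_id is not None:
            self.pending_review.append((listing_id, existing_id))
            return "needs_manual_review"
        self.published[key] = listing_id
        return "published"


queue = ModerationQueue()
first = queue.submit("L-1", "12 Main Street, Apt. 4")
second = queue.submit("L-2", "12 main st apt 4")
```

In this sketch the second submission is held back and paired with the listing it appears to duplicate, so an admin can compare the two side by side.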

The success of a real estate platform is due not only to quality listings but also to other functionality that has to be effectively implemented.

Core Real Estate Platform Features

Creating a real estate web platform isn’t the same as just creating a website with a list of available houses. To attract users to your platform, it should offer something more. We studied the five most popular real estate platforms in the US and the UK and analyzed their main features.

The chosen platforms are Zillow, Trulia, Realtor.com, Rightmove, and Zoopla. All of them have the same core features, which are:

- Registration

- Search filters

- Maps

- Favorites

- Payments

- Messaging

- Calendar

A commercial real estate platform is a place for agents, home buyers, and home sellers. It’s quite a challenge to satisfy all three of these groups. To choose the features you need to focus on, think of different users’ needs. What’s important for home buyers and sellers? How can you make your software platform useful for real estate professionals? Paying attention to the user experience for your platform’s three main groups is key to success.

Let’s start with the very first thing your platform users will have to do – register.

Registration

Usually, real estate platforms offer two types of registration: via email and via social login (using information from a social networking service such as Facebook, Twitter, or Google). You can provide both registration options to let users choose.

Profiles on your real estate platform should let users take different actions – users should be able to switch roles on your website. Today, a user may be searching for a new house, but tomorrow they may want to sell a house. Also, you might add more than buyer and seller roles – for instance, you might add roles for landlords, renters, investors, and others.

You could also provide a separate registration for professional real estate agents, as they use other features than home buyers and sellers.
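One way to model switchable roles is to store a set of roles on the profile and track which one is currently active. The sketch below assumes, purely for illustration, that the agent role can only be obtained through a separate registration function; the names and the license number parameter are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Role(Enum):
    BUYER = "buyer"
    SELLER = "seller"
    LANDLORD = "landlord"
    RENTER = "renter"
    INVESTOR = "investor"
    AGENT = "agent"


@dataclass
class UserProfile:
    email: str
    roles: set = field(default_factory=lambda: {Role.BUYER})
    active_role: Role = Role.BUYER

    def switch_role(self, role):
        """Activate a role, adding it to the profile if it is self-service."""
        if role is Role.AGENT and Role.AGENT not in self.roles:
            raise PermissionError("the agent role requires professional registration")
        self.roles.add(role)
        self.active_role = role


def register_agent(email, license_number):
    """Separate sign-up path for professionals (license check omitted here)."""
    if not license_number:
        raise ValueError("a license number is required")
    return UserProfile(email=email, roles={Role.AGENT}, active_role=Role.AGENT)


user = UserProfile(email="sam@example.com")
user.switch_role(Role.SELLER)  # yesterday a buyer, today a seller
```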

Filters

We’ve looked through three real estate platforms – Zillow (US), Trulia (US), and Rightmove (UK) – to learn which filters are essential.

The core search filters on these three platforms are:

- Listing type (buy, sell, rent)

- Location

- Price

- Number of bedrooms

- Home type

As for additional filters, the most common ones are:

- Floor space (square feet)

- Bathrooms

- Year of construction

- Date when the house was added to the website
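To make the filter lists above more concrete, here is a minimal in-memory sketch of how core and additional filters might be combined. In a real platform this logic would normally live in a database or search engine query; the field names are our own assumptions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Listing:
    listing_type: str  # e.g. "buy" or "rent"
    city: str
    price: int
    bedrooms: int
    home_type: str
    bathrooms: int = 1
    floor_space_sqft: int = 0
    year_built: int = 0


@dataclass
class SearchFilters:
    # Every filter is optional; None means "do not filter on this field".
    listing_type: Optional[str] = None
    city: Optional[str] = None
    max_price: Optional[int] = None
    min_bedrooms: Optional[int] = None
    home_type: Optional[str] = None
    min_bathrooms: Optional[int] = None
    min_floor_space_sqft: Optional[int] = None
    built_after: Optional[int] = None


def matches(listing, f):
    """Return True if the listing satisfies every filter that is set."""
    checks = [
        f.listing_type is None or listing.listing_type == f.listing_type,
        f.city is None or listing.city.lower() == f.city.lower(),
        f.max_price is None or listing.price <= f.max_price,
        f.min_bedrooms is None or listing.bedrooms >= f.min_bedrooms,
        f.home_type is None or listing.home_type == f.home_type,
        f.min_bathrooms is None or listing.bathrooms >= f.min_bathrooms,
        f.min_floor_space_sqft is None
        or listing.floor_space_sqft >= f.min_floor_space_sqft,
        f.built_after is None or listing.year_built >= f.built_after,
    ]
    return all(checks)


def search(listings, f):
    return [listing for listing in listings if matches(listing, f)]
```

Keeping each filter optional means new filters, such as school type or crime rate, can be added later without changing how existing searches behave.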

Find out what the main questions or problems are with real estate services in your area. Based on that, consider adding some other useful filters to improve the user experience.

For example, you might add a search by school. It’s very important for lots of families to find a home near the right school. So an advanced school search can improve the user experience. Filters may vary from school type to grade level or even number of students per teacher. The crime rate is another major subject that prospective home buyers want to know, so you could also add filtering by crime rate.

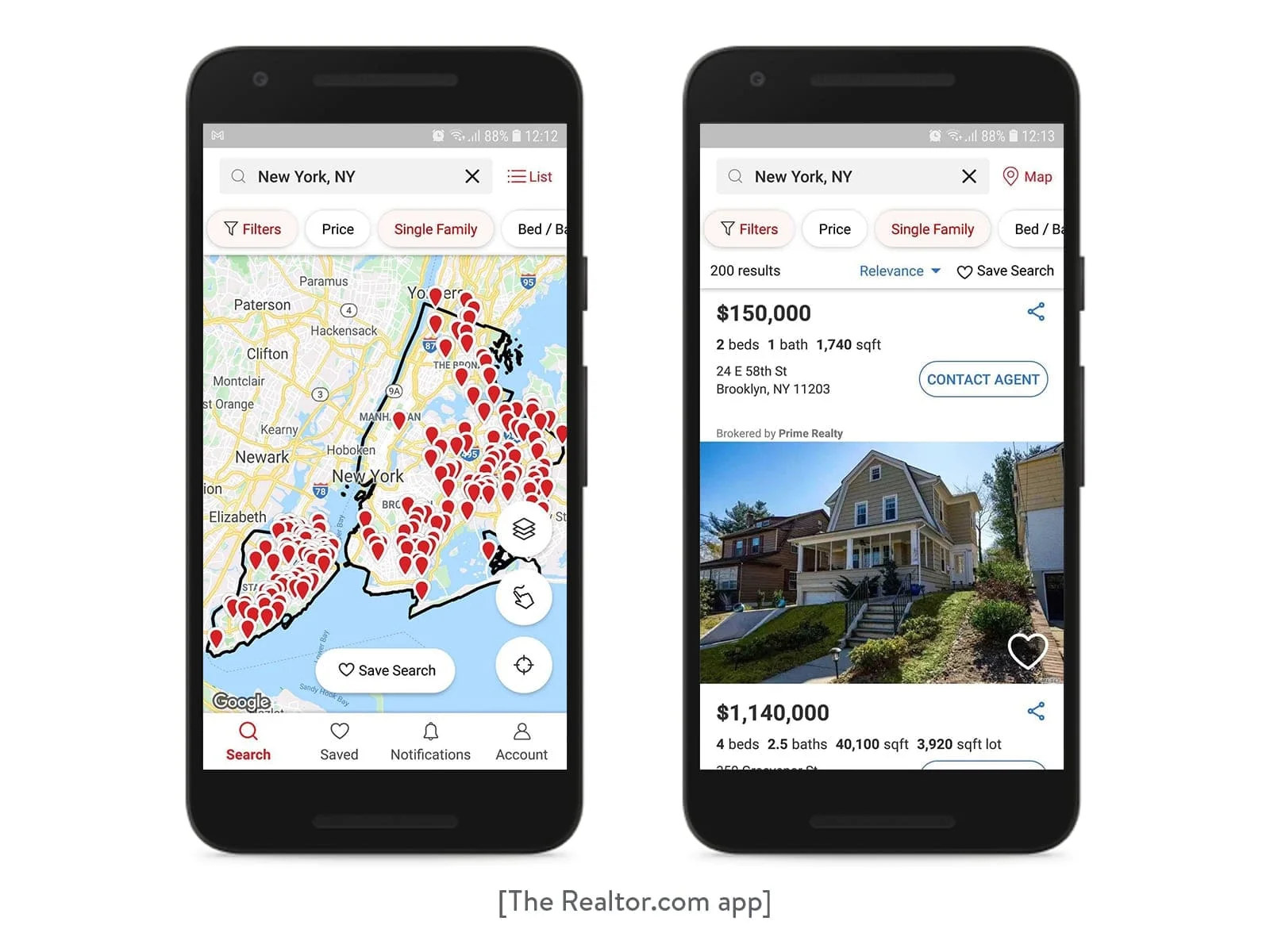

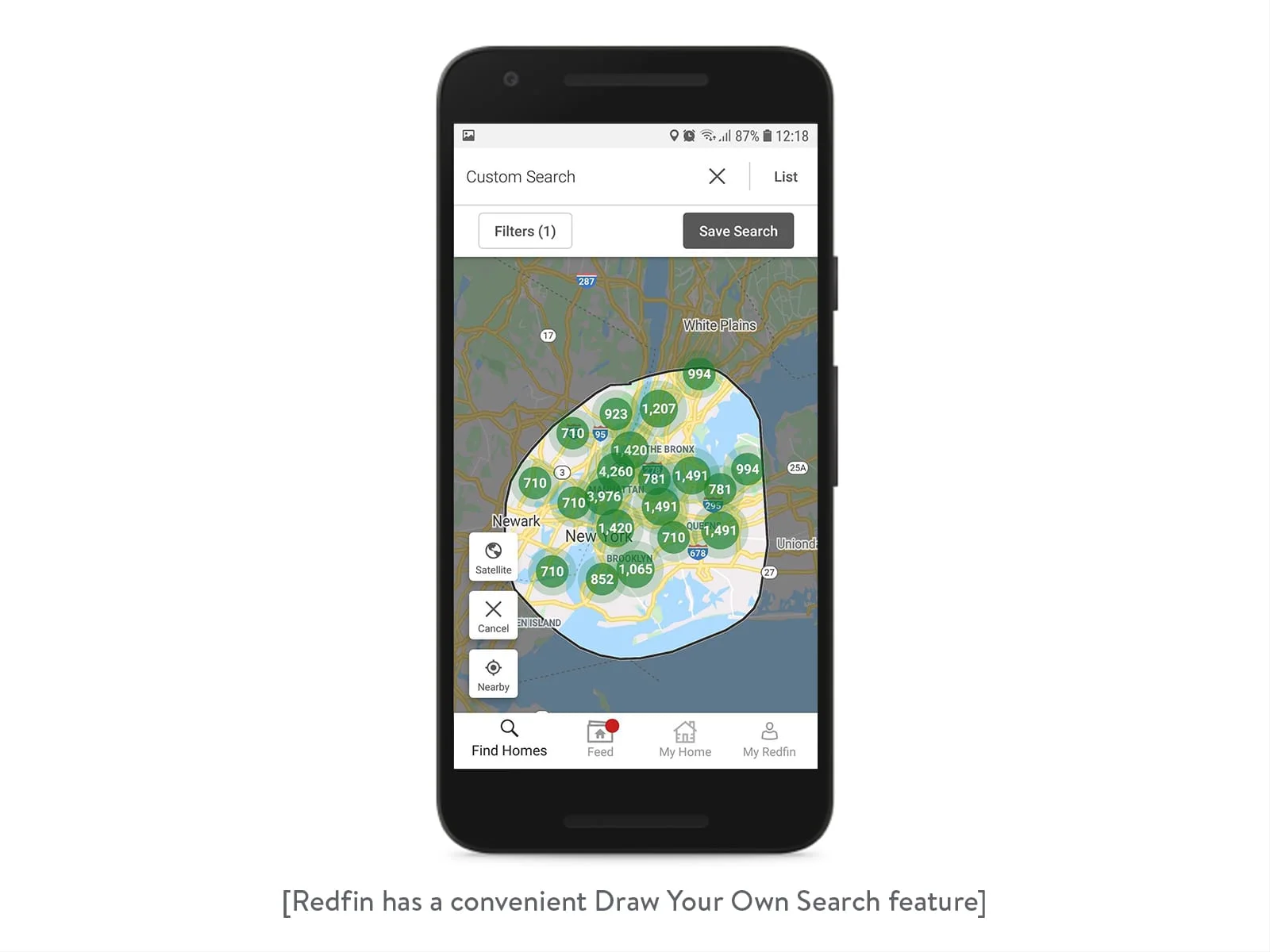

Maps

People are quite used to Google Maps, so it’s a common solution to implement the Google Maps APIs in real estate websites. Besides, you can create a custom view with Google Maps, adding your brand’s colors and icons. You can also add lines to define neighborhoods or regions.

Another advantage of Google Maps is its Street View feature. Street View lets users check how buildings look not only in the photos given on the listing page. Plus, maps let home buyers find out about nearby parks, shops, and parking spots. This saves time for prospective buyers as well as agents.

Favorites

This feature benefits both home buyers and agents. With favorites, home buyers can save their top choices. This lets buyers return to options they’ve added to their favorites and avoid starting their search from the beginning each time they use your site. Users can also see updated information about houses they’ve chosen, such as changes to availability or price.

Agents can see which houses their clients are interested in. Based on these favorite houses, an agent can provide more suitable options for their clients.

Payments

An integrated payment system is another key feature of a real estate platform. Consider integrating PayPal, Braintree, or Stripe. There’s a wide variety of APIs and methods to choose from. Think of the region your platform focuses on and determine the most used payment gateways in that area. Don’t forget that integrating a gateway’s API will place the responsibility for credit card data security on your platform.

Messaging

As the real estate business is about communication, make sure that your platform provides an integrated messenger. Real-time messaging keeps a persistent connection between the user’s client and the server, so the server knows whether users are online or offline and can deliver messages instantly. There are a number of ready-made real-time messaging solutions that you can integrate. We’ll give just a few examples:

- Twilio is a platform that lets you embed voice, chat, and video messaging into web, desktop, and mobile software.

- Layer provides powerful APIs and SDKs that give you total control over backend logic and the flexibility to integrate with existing services. It also enables chat, voice, and video messaging.

- PubNub offers flexible real-time APIs and a global messaging infrastructure.

Calendar

Most real estate platforms have calendars so that realtors and potential buyers can know when houses are open for showings. Real estate website developers can create calendars specially for a platform or implement APIs. You can use the Apple, Google, or Microsoft calendar APIs, for example. Or you can implement an open source calendar that gives you the opportunity to change how it looks. For more information, read our article on how to create or implement a calendar in your app.

Critical Points for Real Estate Website Development

You’ll have lots of things to tackle during real estate website development. We decided to take a look at some of the most common issues that real estate platforms face.

Website speed

First of all, if you want users to spend more time on your platform and revisit it, your website should be fast. The listings feed should load quickly. And, most importantly, all photos should be high resolution yet still load fast. As a rule of thumb, a web page should load within about 2 seconds. If your site takes longer, you’re in danger of losing potential clients.

Site speed impacts traffic and user engagement. Actually, the longer a page takes to load, the less time is spent on the page on average. Let’s see what you can do to speed up your real estate website.

Optimize images

To speed up your website, you can start by optimizing the images. Real estate portals have plenty of them. Because photos are crucial when it comes to choosing houses, don’t decrease the resolution of images. One solution is to compress images so the quality is preserved while the size is reduced.

Choose your backend programming language and framework carefully to optimize resources, and keep your JavaScript, HTML, and CSS files lean, since every extra file slows down rendering. Roughly 80 percent of end-user response time is spent downloading images, stylesheets, scripts, and other page components. Reduce the number and complexity of HTTP requests to render web pages faster. The easiest way to do that is to simplify page design, but real estate platforms have rich content, so one of the most convenient solutions is to combine files. If your site serves multiple CSS and JavaScript files, you can combine them into a single CSS file and a single JavaScript file. That will speed up loading because the number of HTTP requests will be reduced. You can also minify JavaScript, HTML, and CSS by getting rid of line breaks, extra spaces, and comments.
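To illustrate what combining and minifying means in practice, here is a deliberately naive sketch for CSS. It only strips comments and collapses whitespace, and it would mangle whitespace inside quoted strings, so in a real build you would use an established bundler or minifier instead. The function names are our own.

```python
import re


def minify_css(source):
    """Naive CSS minifier: remove comments and collapse whitespace."""
    without_comments = re.sub(r"/\*.*?\*/", "", source, flags=re.DOTALL)
    collapsed = re.sub(r"\s+", " ", without_comments)
    # Drop spaces around punctuation that never needs them.
    tight = re.sub(r"\s*([{};,])\s*", r"\1", collapsed)
    return tight.strip()


def combine_css(sources):
    """Merge several stylesheets into one string, so one request serves them all."""
    return "".join(minify_css(source) for source in sources)


header_css = """
/* header styles */
.header {
    color: red;
    margin: 0;
}
"""
footer_css = ".footer { color: blue; }"
bundle = combine_css([header_css, footer_css])
```

The two stylesheets end up as one compact string that can be served as a single file.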

Having a high volume of traffic to your site is great. But you need to choose the right configuration for your web server to support this traffic. When more users than predicted visit your site at the same time, it may slow down or even crash. To avoid such problems, an experienced software development company will use a Content Delivery Network (CDN). A CDN is a network of geographically dispersed servers that allow static content like photos, CSS files, and JavaScript files to load faster. With a CDN, each user connects to the nearest server and thereby downloads static content from the closest source. When data travels shorter distances, pages load faster, which helps you keep visitors on your platform.
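On the application side, using a CDN often comes down to pointing static asset URLs at the CDN’s hostname, while the CDN itself takes care of routing each user to a nearby server. Here is a minimal sketch of that URL rewriting; the hostname and the list of extensions are placeholders, not a recommendation for any particular provider.

```python
from urllib.parse import urlsplit, urlunsplit

CDN_HOST = "cdn.example.com"  # hypothetical CDN hostname
STATIC_EXTENSIONS = (".jpg", ".jpeg", ".png", ".webp", ".css", ".js")


def to_cdn_url(url):
    """Point static assets at the CDN; leave dynamic pages untouched."""
    parts = urlsplit(url)
    if not parts.path.lower().endswith(STATIC_EXTENSIONS):
        return url
    scheme = parts.scheme or "https"
    return urlunsplit((scheme, CDN_HOST, parts.path, parts.query, parts.fragment))


photo = to_cdn_url("https://www.example.com/photos/house-1.JPG?w=800")
page = to_cdn_url("/listings/42")
```

Listing pages and API calls keep going to your own servers, while photos, stylesheets, and scripts are fetched from the CDN.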

Reduce the number of plugins

Of course, plugins are useful, as they can add functionality to your site and improve the user experience. Moreover, they help to avoid messiness in code. These advantages prompt developers to add more and more plugins. The bad news is that having a lot of plugins affects the speed of your website and can even cause crashes. Cut unnecessary plugins to improve site speed.

Customer Relationship Management (CRM) system

Having a solid real estate CRM is vital to the success of your business, as the real estate industry is all about relationships and communication. A CRM is a tool that helps you manage and automate interactions with clients. And because a client database is one of the most important parts of a real estate business, it should always be up-to-date and well kept.

Sort out your needs before choosing a CRM system. The greatest needs in a real estate business are:

- Contact management

- Marketing tools

- A customizable website with MLS

- Lead tracking

The best CRM system is one that can handle all of your business needs. It’s clear that having a properly working CRM system is key to effective communication with your customers. Zillow and Trulia have their own CRM system – the Premier Agent App. Realtor.com uses the Top Producer CRM. There are lots of CRM systems; some are generic, while others are created especially for real estate. The most popular are Propertybase, Rethink CRM, and Pipedrive.

Responsive website

A responsive website means that users will have an equally great experience using your platform on different devices, from desktops to smartphones and tablets. A responsive website makes sure that platform data can be accessed across all devices. But there are more reasons why your website should be responsive.

Responsiveness helps increase the number of leads you get from your site as mobile and tablet users also have access to your real estate platform.

Also, Google is quite happy with responsive websites. Actually, Google strongly recommends responsive design. As all responsive website pages have the same URL, it’s simpler to index and crawl them. There’s still another way to attract mobile users to your platform – build a standalone mobile app.

Third-party services

In the era of digitalization, there are lots of services that are connected with real estate. For example, mortgage calculators and mortgage comparison tools are essential features of a real estate platform. Homebuyers benefit from these tools as they don’t have to look for a mortgage somewhere else. It’s great if buyers can compare rates from different lenders to find the best loan.
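At the heart of a mortgage calculator is the standard fixed-rate annuity formula: with principal P, monthly rate r, and n monthly payments, the payment is P·r·(1+r)^n / ((1+r)^n − 1). Here is a minimal sketch of that calculation plus a simple lender comparison. The lender names and rates are made up for illustration, and a real tool would also account for taxes, insurance, and fees.

```python
def monthly_payment(principal, annual_rate_percent, years):
    """Monthly payment for a fixed-rate loan (principal and interest only)."""
    months = years * 12
    monthly_rate = annual_rate_percent / 100 / 12
    if monthly_rate == 0:
        return principal / months
    growth = (1 + monthly_rate) ** months
    return principal * monthly_rate * growth / (growth - 1)


def cheapest_offer(principal, years, rates_by_lender):
    """Return (lender, payment) for the offer with the lowest monthly payment."""
    payments = {
        lender: monthly_payment(principal, rate, years)
        for lender, rate in rates_by_lender.items()
    }
    best = min(payments, key=payments.get)
    return best, payments[best]


# Hypothetical lenders and rates, for illustration only.
offers = {"Lender A": 6.5, "Lender B": 6.0, "Lender C": 6.25}
lender, payment = cheapest_offer(300_000, 30, offers)
print(f"{lender}: ${payment:,.2f} per month")
```

For a $300,000 loan over 30 years, the 6% offer comes out at roughly $1,799 per month, which is the kind of side-by-side figure buyers want to see on a listing page.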

A moving service is also a necessary part of changing homes. You could integrate a service in your platform so users can both buy or sell houses and request moving services on your website.

To build a successful real estate website, you should aim not only to design a great listings feed but also to create a community on your platform. Pay attention to the services people typically use when buying, selling, or renting houses. Try to provide all the necessary services so that users don’t need to leave your platform in order to find additional real estate-related information. Choose a real estate web app development company wisely and make sure that it’s able to handle all the problems your platform might face.
