Manufacturing lines generate more visual data than any human team can process. A single production shift produces thousands of inspection points, equipment readings, and process events, most of which go unreviewed until something goes wrong. By then, the defect has already shipped, the equipment has already failed, or the safety incident has already happened.

Computer vision in manufacturing provides continuous, real-time visibility into what is happening on the floor at every stage of production. Quality, safety, equipment health, and process efficiency all become measurable and actionable from a single system.

The business case is becoming harder to ignore. According to the World Economic Forum, manufacturers that integrate AI with IoT, cloud, and other technologies outperform peers by 16% or more, and 94% of successful industrial transformations combine multiple technology domains rather than deploying AI in isolation.

This guide covers what computer vision can do in a manufacturing environment, which use cases deliver the clearest return, what it takes to implement a system that works on a real production line, and how Yalantis approaches each stage of the process.

Why do you need computer vision in manufacturing?

Manual inspection has a ceiling. Beyond a certain production volume, human inspectors cannot keep up: attention fades over a shift, and some defects are too small or pass too quickly to catch with the naked eye. Computer vision removes that ceiling. Here are the benefits of computer vision in manufacturing:

- Consistent defect detection: A camera-based system inspects every unit at full line speed and applies the same detection criteria from the first unit of the shift to the last. Defect rates drop, and fewer flawed products reach your customers.

- Full traceability: Every defect gets logged with a timestamp, line number, and production context. That data shows you where defects cluster, which equipment is drifting, and what triggered a quality drop, so your team can investigate and mitigate the issue.

- Predictive maintenance signals: Subtle visual changes in equipment output often appear before a breakdown does. The system flags those early, so you schedule maintenance on your terms rather than respond to an unplanned stoppage.

- Reduced dependency on manual inspection: Experienced inspectors are hard to hire and harder to retain. Automating repetitive visual checks frees your team for work that requires judgment and keeps inspection quality stable when headcount changes.

- Real-time production visibility: Dashboards give your operations team a live view of defect rates, model performance, and line status. Issues surface in seconds, not at the end-of-shift report.

Key computer vision solutions for manufacturing

Manufacturers deploy computer vision systems to solve operational problems that traditional inspection and monitoring methods cannot address reliably. Vision-based systems provide continuous observation of products, equipment, and production processes without slowing operations. Let’s review the central use cases and the common production problems each one helps you solve.

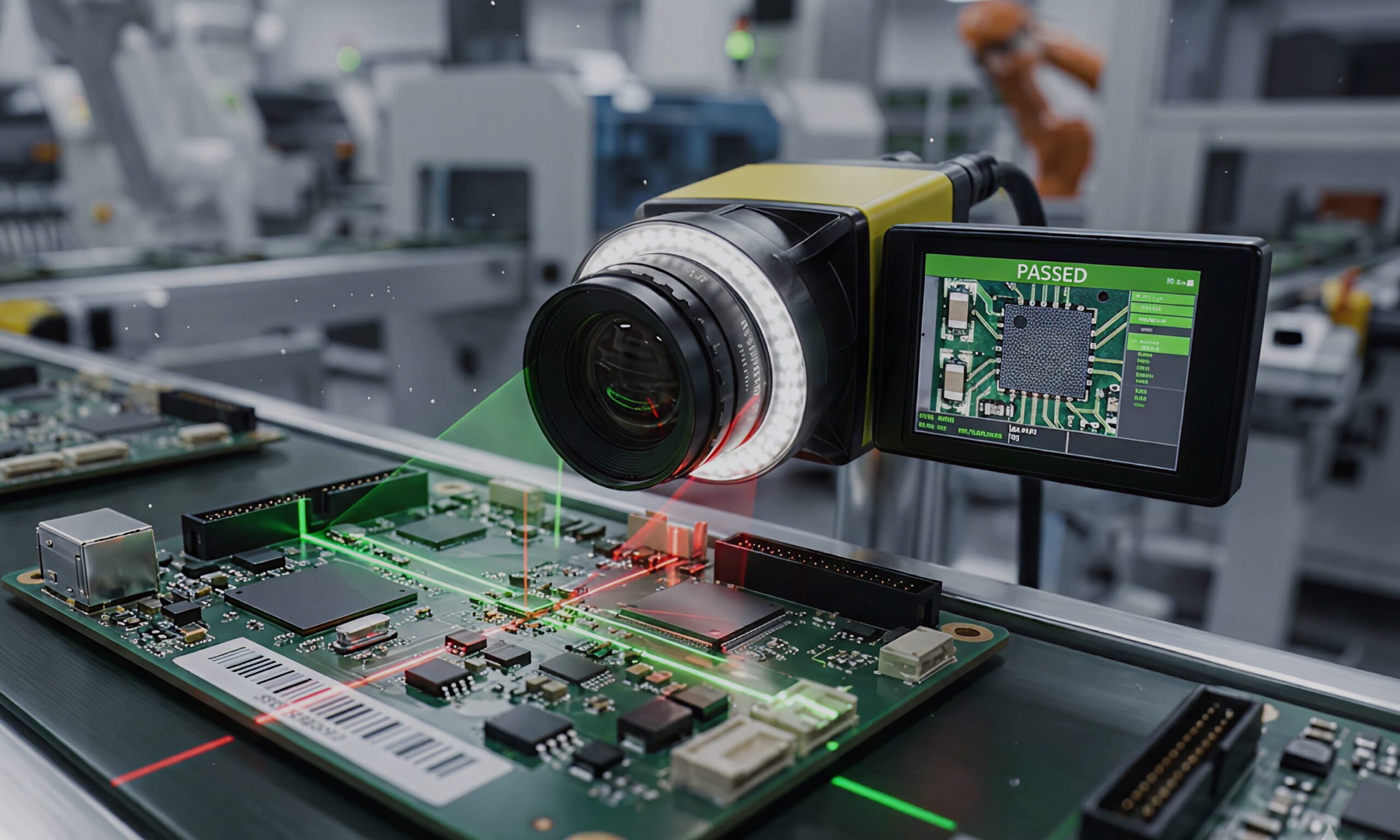

Computer vision for quality control and defect detection

Quality control often depends on manual inspection performed under time pressure and variable lighting conditions. Operators must detect small defects while maintaining production speed. Such circumstances often lead to inconsistent inspection results and missed defects. As production volumes increase, manual inspection becomes difficult to scale without increasing labor costs or slowing the line.

Computer vision systems automate inspection tasks that are difficult to perform consistently at production speed. Manufacturers apply them to such tasks as:

- Surface inspection to detect scratches, cracks, and dents;

- Assembly verification to identify missing or misaligned components;

- Packaging and label inspection to prevent shipment errors;

- Dimensional verification to detect alignment deviations.

In practice, manufacturers deploy inspection systems that combine industrial cameras, controlled lighting, and edge AI devices integrated directly with the production line. Cameras capture images as products pass inspection points, and trained models analyze them instantly. Defective parts can be rejected automatically, while uncertain cases are flagged for operator review. Hybrid setups that combine automation with human validation can reach payback in less than a year.
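The reject-or-review split described above can be sketched as a simple thresholding rule on the model's defect score. This is a minimal illustration, not a production design: the names `Action`, `Thresholds`, and `triage`, and the threshold values themselves, are assumptions made for the example. Real thresholds would be tuned on validation data from the line.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    PASS = "pass"
    REJECT = "reject"
    REVIEW = "review"


@dataclass(frozen=True)
class Thresholds:
    # Hypothetical values; real thresholds come from validation data.
    reject_above: float = 0.90
    pass_below: float = 0.10


def triage(defect_score: float, t: Thresholds = Thresholds()) -> Action:
    """Map a model's defect probability (0..1) to a line action."""
    if not 0.0 <= defect_score <= 1.0:
        raise ValueError("defect_score must be in [0, 1]")
    if defect_score >= t.reject_above:
        return Action.REJECT   # confident defect: reject automatically
    if defect_score <= t.pass_below:
        return Action.PASS     # confident good part: let it through
    return Action.REVIEW       # uncertain: flag for an operator
```

Widening the gap between the two thresholds sends more parts to operators and fewer to automatic decisions, which is one way to tune a hybrid setup toward caution during early deployment.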

Case in point: Applying computer vision for defect detection

A Tier-1 European automotive supplier illustrates this approach. The company struggled to detect micro-fractures in cast aluminum brake components moving along a conveyor at 1.2 m/s, and defects escaped inspection at a rate of 0.8%. Yalantis designed a custom automated optical inspection system with specialized lighting, telecentric optics, and an AI model trained on the client’s defect types. The deployed solution achieved 99.7% defect detection accuracy, increased line speed by 15%, and delivered ROI in eight months, eliminating defect escapes to OEM customers.

A comprehensive computer vision solution can significantly improve inspection performance. Defect detection rates may increase by up to 90% compared to human inspection. At the same time, inspection productivity can improve by up to 50%, allowing manufacturers to maintain quality control without slowing production.

Computer vision for anomaly detection and predictive maintenance

Equipment failures rarely happen without warning. Machines usually show early signs of degradation through subtle changes in motion, temperature, or visible wear. Traditional maintenance relies on fixed schedules or isolated sensor readings, which often fail to capture these early indicators. Poor maintenance strategies can reduce productive capacity by 5% to 20%, while unplanned downtime costs industries around $50 billion each year.

One way to mitigate the problem is to catch deviations from normal equipment behavior while an issue is still developing. Computer vision enables continuous visual monitoring of equipment condition and helps detect early signs of failure. Here is how:

- Motion anomaly detection is used to identify abnormal vibration or movement patterns;

- Thermal anomaly detection helps to spot overheating components before failure;

- Visual condition monitoring detects leaks, corrosion, or material buildup;

- Automated gauge and indicator reading tracks analog measurements over time.

Creating such a solution requires combining computer vision with data from Industrial IoT sensors and maintenance logs into a holistic picture of asset health. Vision models establish a baseline of normal behavior and flag visual anomalies as they appear. These signals are correlated with sensor data and maintenance history to support early intervention. AI-driven predictive maintenance can reduce machine downtime by 30% to 50%, extend equipment life by 20% to 40%, and increase asset productivity by up to 20%, while lowering overall maintenance costs by up to 10%.
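To make the idea of a "baseline of normal behavior" concrete, here is a minimal sketch that tracks one scalar visual feature per frame (for example, a motion-energy or mean-temperature value extracted upstream) and flags readings that deviate strongly from recent history. The class name `BaselineMonitor`, the rolling z-score rule, and the default parameters are illustrative assumptions; production systems typically use richer features and learned models.

```python
from collections import deque
from statistics import mean, stdev


class BaselineMonitor:
    """Rolling z-score check on a single per-frame feature value."""

    def __init__(self, window: int = 50, z_threshold: float = 3.0,
                 min_samples: int = 10):
        self.history = deque(maxlen=window)  # recent normal readings
        self.z_threshold = z_threshold
        self.min_samples = min_samples

    def update(self, value: float) -> bool:
        """Return True if value is anomalous; otherwise add it to the baseline."""
        if len(self.history) >= self.min_samples:
            mu = mean(self.history)
            sigma = stdev(self.history)
            # With zero spread no z-score is defined, so nothing is flagged.
            if sigma > 0 and abs(value - mu) / sigma > self.z_threshold:
                return True  # anomaly: keep it out of the baseline
        self.history.append(value)
        return False
```

A flag from a monitor like this would be one input among several; as the paragraph above notes, it is the correlation with sensor data and maintenance history that supports an intervention decision.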

Computer vision for manufacturing safety

Manufacturing environments contain constant safety risks. Workers operate near heavy machinery, moving vehicles, and hazardous materials, where even a small mistake can cause serious injury. Safety violations therefore need to be caught as they happen, not discovered after an incident occurs.

What’s more, the financial impact of work-related incidents is substantial. US businesses spend more than $1 billion per week on non-fatal workplace injuries. As of 2022, work-related deaths and injuries cost the government, employers, and employees $1.2 trillion in the US alone.

To monitor safety conditions continuously and prevent incidents, manufacturers deploy computer vision systems, which help them with:

- Personal protective equipment (PPE) detection to identify workers without helmets, safety vests, or protective eyewear;

- Restricted-zone monitoring to detect entry into hazardous areas;

- Worker proximity monitoring around moving machinery;

- Personnel authorization checks to ensure only approved workers have access to specific zones or equipment;

- Vehicle interaction monitoring to reduce collisions with forklifts or automated guided vehicles.

Depending on the factory-floor specifics, these use cases are combined to detect possible unsafe situations early and trigger immediate responses before an accident occurs. When a hazard is detected, the system can trigger alarms, notify supervisors, or automatically slow nearby equipment.
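Restricted-zone monitoring, for instance, often reduces to a geometric check: does a detected person stand inside a polygon drawn on the camera image? The sketch below assumes an upstream detector already supplies bounding boxes as `(x_min, y_min, x_max, y_max)` in pixel coordinates and uses the bottom-center of the box as the person's floor position. The function names and that convention are assumptions for illustration.

```python
from typing import List, Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]  # x_min, y_min, x_max, y_max


def point_in_polygon(p: Point, polygon: List[Point]) -> bool:
    """Ray-casting test: True if p lies inside the polygon."""
    x, y = p
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]
        # Does this edge straddle the horizontal line through p?
        if (y1 > y) != (y2 > y):
            # x-coordinate where the edge crosses that line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def foot_point(box: Box) -> Point:
    """Bottom-center of a bounding box (image y grows downward)."""
    x_min, _, x_max, y_max = box
    return ((x_min + x_max) / 2, y_max)


def in_restricted_zone(box: Box, zone: List[Point]) -> bool:
    return point_in_polygon(foot_point(box), zone)
```

Using the foot point rather than the box center avoids false alarms when a person's upper body overlaps the zone in the image while they are standing outside it.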

Computer vision for process monitoring and production automation

Production lines often run with limited visibility into real operating conditions. Bottlenecks, slowdowns, and process deviations may remain unnoticed until production targets are missed. Manual monitoring provides only periodic insight and cannot capture short disruptions or gradual efficiency losses that accumulate over time.

Computer vision improves production visibility and enables automation by continuously monitoring product flow and process behavior. Manufacturers apply it to tasks such as:

- Production line monitoring, which measures throughput and detects slowdowns;

- Line tracking, which follows product movement along conveyors and identifies interruptions;

- Automated sorting, which classifies products and directs them to the correct destination;

- Vision-guided robotics, which enables picking and placement when precise positioning is required.
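As a rough illustration of production line monitoring, the sketch below counts units that a camera sees crossing a counting line and reports a slowdown when the count in a sliding time window falls below a target. The class `ThroughputMonitor`, the 60-second window, and the minimum of 30 units are hypothetical values chosen for the example.

```python
from collections import deque


class ThroughputMonitor:
    """Sliding-window unit counter for one counting line."""

    def __init__(self, window_s: float = 60.0, min_units: int = 30):
        self.window_s = window_s
        self.min_units = min_units
        self.timestamps = deque()  # times (seconds) at which units passed

    def record(self, t: float) -> None:
        """Record one unit crossing the counting line at time t."""
        self.timestamps.append(t)

    def units_in_window(self, now: float) -> int:
        # Drop events that have fallen out of the window.
        while self.timestamps and self.timestamps[0] <= now - self.window_s:
            self.timestamps.popleft()
        return len(self.timestamps)

    def is_slow(self, now: float) -> bool:
        return self.units_in_window(now) < self.min_units
```

Because every crossing is timestamped, the same data can later show when a slowdown started, which helps link it to a specific machine or shift event.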

Recent advances in computer vision are also expanding the capabilities of industrial automation systems, including robotic arms, automated sorting equipment, and collaborative robots (cobots). According to McKinsey, Industry 4.0 solutions that combine AI and advanced automation can deliver 15% to 30% improvements in labor productivity.

Improvements in object recognition and semantic segmentation allow these systems to identify objects, understand their position in space, and adjust actions accordingly. Instead of following rigid preprogrammed paths, vision-enabled robots can detect tools, components, or empty shelf space and respond to changes in the environment. This capability makes automation flexible and human-robot collaboration safer.

Computer vision architecture for manufacturing environments

Computer vision on the factory floor needs fast processing, stable integration with industrial systems, and predictable behavior under real production conditions.

Edge processing vs cloud processing

Production lines cannot wait for network latency. When a defect appears or a worker enters a restricted zone, the system must react immediately. Edge processing runs models directly on devices near the cameras, so no data needs to travel to a remote server. Images are analyzed on-site within milliseconds, with no network round trip and no dependence on a stable connection. Even resource-constrained hardware can handle real-time inference using approaches such as TinyML for edge IoT devices, which allow models to run efficiently without relying on cloud resources.

Cloud processing is still used, but for different tasks. Teams use it to train models on large datasets, store historical data, and analyze trends across multiple facilities. Some manufacturers also send selected images to the cloud for deeper analysis, where speed is less critical. In practice, most systems split responsibilities: edge for real-time decisions, cloud for analytics and improvement.

Industrial computer vision and the IIoT ecosystem

A vision system becomes useful only when it connects to the rest of the factory. Cameras detect events, but programmable logic controllers (PLCs) control machines, and manufacturing execution systems (MES) track production processes and workflows. IIoT data management and analytics pipelines connect these systems, allowing the vision system to act on what it sees.

For example, a detected defect can trigger automatic part rejection, while a slowdown on the line can be linked to a specific machine. Without this connection, vision remains just monitoring. With it, visual data becomes part of the decision-making process.

Secure and maintainable vision systems

Factory environments change over time. Lighting shifts, equipment wears down, and new product variants appear. Vision models require regular updates to stay accurate, but those updates mustn’t interrupt production or introduce new risks. That is why Secure Boot and OTA update pipelines are essential for maintaining system integrity in live production environments.

On the operational side, teams track model performance continuously, detect accuracy drift early, and retrain models before performance drops affect the line. Security requires the same discipline. When vision systems connect to industrial networks, access must be controlled, and data must be protected against unauthorized changes or outside interference.
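One simple way to detect accuracy drift is to audit a sample of predictions (for example, through operator verification) and compare rolling accuracy against the accuracy measured at validation time. The sketch below assumes such audited outcomes are available; `DriftMonitor`, the tolerance, and the window size are illustrative names and values, not a description of any specific tool.

```python
from collections import deque


class DriftMonitor:
    """Compare rolling accuracy on audited samples with a fixed baseline."""

    def __init__(self, baseline_accuracy: float, tolerance: float = 0.02,
                 window: int = 200):
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        self.results = deque(maxlen=window)  # True = prediction was correct

    def add(self, prediction_correct: bool) -> None:
        self.results.append(prediction_correct)

    def rolling_accuracy(self) -> float:
        if not self.results:
            raise ValueError("no audited samples yet")
        return sum(self.results) / len(self.results)

    def needs_retraining(self) -> bool:
        # Wait for a full window so a few early errors do not raise an alert.
        if len(self.results) < self.results.maxlen:
            return False
        return self.rolling_accuracy() < self.baseline - self.tolerance
```

A check like this only signals that something changed. Deciding whether the cause is lighting, a new product variant, or equipment wear still requires looking at the misclassified images.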

How does Yalantis approach computer vision implementation?

At Yalantis, we approach computer vision implementation as a structured process, helping you achieve your business goals. Each step focuses on validating value, ensuring technical feasibility, and preparing the solution for real factory conditions.

1. Identifying viable use cases

Our team starts with a comprehensive analysis of your manufacturing workflows to identify where visual data can improve quality, safety, or efficiency. We narrow down to use cases where a visual system can produce a measurable result, whether that’s fewer returns, faster throughput, or fewer line stoppages.

Not every problem on the floor is a good fit for computer vision. We tell you that early, so you don’t spend six months building something that delivers marginal value.

2. Solution design and system setup

Once we agree on the target, our team designs the solution around your production environment. Our engineers plan camera placement and lighting setup to ensure consistent image quality across shifts. We select processing hardware that can detect a defect before it moves to the next station.

The architects from Yalantis also map out how the system connects to your existing infrastructure. The computer vision layer must feed data directly into your manufacturing execution system and trigger your line controllers automatically when it catches an issue.
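Hardware selection at this stage can be framed as a time budget: the part's travel time from the camera to the reject mechanism bounds how long capture, inference, and actuation may take together. The function below is a back-of-the-envelope sketch. Its name, parameters, and the safety margin are assumptions, and the example figures are illustrative apart from the 1.2 m/s conveyor speed mentioned in the case above.

```python
def inference_budget_ms(distance_m: float, line_speed_m_s: float,
                        capture_ms: float, plc_actuation_ms: float,
                        safety_margin: float = 0.2) -> float:
    """Time left for model inference, in milliseconds.

    distance_m       -- camera-to-reject-actuator distance along the conveyor
    line_speed_m_s   -- conveyor speed
    capture_ms       -- image exposure and transfer time
    plc_actuation_ms -- time for the PLC and actuator to respond
    safety_margin    -- fraction of travel time held in reserve
    """
    if line_speed_m_s <= 0:
        raise ValueError("line speed must be positive")
    travel_ms = distance_m / line_speed_m_s * 1000.0
    usable_ms = travel_ms * (1.0 - safety_margin)
    return usable_ms - capture_ms - plc_actuation_ms


# Example: 0.6 m of conveyor at 1.2 m/s gives 500 ms of travel time.
budget = inference_budget_ms(0.6, 1.2, capture_ms=20.0, plc_actuation_ms=50.0)
```

With these example numbers, the model has roughly 330 ms per part. A negative result would mean the chosen camera position or hardware cannot keep up at that line speed.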

3. Pilot deployment

Yalantis installs a working version on a real section of your line and runs it under actual production conditions. That is where real issues surface: a reflection that throws off the model, a product variant the model was not trained on, or a timing mismatch with the conveyor.

We resolve those issues before they become expensive. A problem caught at this stage takes days to fix. The same problem discovered after a full rollout can take months.

4. Scaling to production

With a validated system, we extend it to the full line or across facilities. Each installation gets tuned to local conditions because lighting, equipment, and product mix vary from site to site. Your team gets dashboards showing model performance, defect rates, and live alerts, so you always know whether the system is doing its job.

5. Ongoing model maintenance

A computer vision model is not a one-time setup. Products change, equipment ages, and production conditions shift. A model trained today will drift without regular maintenance.

We run scheduled reviews, update models when accuracy starts to slip, and push changes without stopping production. Your team also gets enough training to flag issues independently, so the day-to-day operation does not depend on us.

Challenges of implementing computer vision in manufacturing

Computer vision systems often break when moved from lab conditions to real production lines. Data gaps, unstable environments, and rigid infrastructure create most failures. Teams that ignore these constraints face low accuracy and long deployment cycles. Let’s review the ways Yalantis mitigates the most common implementation challenges.

Data availability and model training

Manufacturers rarely have enough defect data. Critical defects appear infrequently, but missing even one can cause major losses. Models trained on limited datasets fail to detect edge cases.

Yalantis builds datasets directly from production. Teams collect real images, label defect types, and generate synthetic samples to cover even rare scenarios. Engineers retrain models as new defects appear, so performance reflects actual conditions on the line.
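The simplest form of synthetic sample generation is image augmentation: producing varied copies of a scarce defect image by flipping it, shifting brightness, and adding noise. The sketch below is a toy version that represents a grayscale image as nested lists so it runs without dependencies. The function names and parameter ranges are assumptions; real pipelines would normally use an array or imaging library and may rely on more advanced generation methods.

```python
import random
from typing import List

Image = List[List[int]]  # grayscale pixels, 0-255


def _clamp(value: int) -> int:
    return max(0, min(255, value))


def flip_horizontal(img: Image) -> Image:
    return [row[::-1] for row in img]


def adjust_brightness(img: Image, delta: int) -> Image:
    return [[_clamp(px + delta) for px in row] for row in img]


def add_noise(img: Image, amplitude: int, rng: random.Random) -> Image:
    return [[_clamp(px + rng.randint(-amplitude, amplitude)) for px in row]
            for row in img]


def augment(img: Image, n: int, seed: int = 0) -> List[Image]:
    """Generate n randomized variants of one source image."""
    rng = random.Random(seed)  # seeded so runs are reproducible
    variants = []
    for _ in range(n):
        variant = flip_horizontal(img) if rng.random() < 0.5 else img
        variant = adjust_brightness(variant, rng.randint(-30, 30))
        variant = add_noise(variant, 5, rng)
        variants.append(variant)
    return variants
```

Augmentations should mirror variation that actually occurs on the line. A flip, for example, only makes sense if parts can arrive in either orientation.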

Factory-floor conditions

Factory environments distort visual input. Lighting shifts, reflective surfaces create glare, and dust or vibration affects image quality. These factors reduce model accuracy even when the model itself is well trained.

To mitigate this issue, Yalantis experts stabilize image capture before applying AI. Engineers design optical setups with controlled lighting, industrial enclosures, and camera configurations adapted to each line. Stable input leads to stable detection.

Integration with legacy equipment

Production lines rely on tightly connected systems that control every step of the process. Machines are managed by PLCs, which execute commands such as start, stop, or reject a part. MES handles the production flow and tracking. Any new system must interact with both without breaking existing logic.

Yalantis integrates computer vision directly into this setup. When the system detects a defect or anomaly, it sends a signal to the PLC, which can immediately reject a part or adjust machine behavior. At the same time, inspection results are recorded in the MES to keep production data consistent. This approach allows manufacturers to add vision capabilities without redesigning the entire production line.
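The flow described above can be sketched with two narrow interfaces, one for the PLC and one for the MES. Everything named here (`PlcClient`, `MesClient`, `signal_reject`, `record`, and the in-memory stand-ins) is hypothetical and exists only to show the order of operations. A real integration would sit on top of whatever industrial protocol and MES API the plant already uses.

```python
from dataclasses import dataclass
from typing import List, Protocol


@dataclass(frozen=True)
class InspectionResult:
    part_id: str
    station: str
    defective: bool
    timestamp: float


class PlcClient(Protocol):
    def signal_reject(self, station: str) -> None: ...


class MesClient(Protocol):
    def record(self, result: InspectionResult) -> None: ...


def handle_result(result: InspectionResult, plc: PlcClient,
                  mes: MesClient) -> None:
    # Time-critical action first: tell the PLC to divert the part.
    if result.defective:
        plc.signal_reject(result.station)
    # Every result, pass or fail, is recorded so MES data stays consistent.
    mes.record(result)


class FakePlc:
    """In-memory stand-in for a PLC connection, for illustration only."""
    def __init__(self) -> None:
        self.rejects: List[str] = []

    def signal_reject(self, station: str) -> None:
        self.rejects.append(station)


class FakeMes:
    """In-memory stand-in for an MES client, for illustration only."""
    def __init__(self) -> None:
        self.records: List[InspectionResult] = []

    def record(self, result: InspectionResult) -> None:
        self.records.append(result)
```

Keeping the vision logic behind small interfaces like these is one way to add it to a line without touching existing PLC programs beyond a single reject input.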

Accuracy and reliability requirements

Manufacturing systems cannot tolerate unstable performance. A single false positive can stop the line. A missed defect can pass downstream and cause larger failures. Performance must stay consistent even as conditions on the line change.

Yalantis monitors model performance in production and retrains models if accuracy starts to drop. Edge deployment keeps response times fast and predictable, even when network connectivity is limited.
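The two failure modes in this section, false positives and missed defects, can be tracked separately from a confusion matrix of audited inspections. The sketch below uses one possible set of definitions, which is an assumption: the false reject rate is the share of good parts rejected, and the escape rate is the share of accepted parts that are actually defective.

```python
from typing import Dict


def inspection_metrics(tp: int, fp: int, fn: int, tn: int) -> Dict[str, float]:
    """Quality metrics from audited inspection counts.

    tp -- defective parts correctly rejected
    fp -- good parts wrongly rejected (false positives)
    fn -- defective parts wrongly accepted (missed defects)
    tn -- good parts correctly accepted
    """
    defective = tp + fn
    good = fp + tn
    rejected = tp + fp
    accepted = fn + tn
    return {
        "recall": tp / defective if defective else 0.0,
        "precision": tp / rejected if rejected else 0.0,
        "false_reject_rate": fp / good if good else 0.0,
        "escape_rate": fn / accepted if accepted else 0.0,
    }
```

Tracking both rates matters because they trade off against each other: a stricter threshold lowers escapes but raises false rejects, and each has a different cost on the line.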

Why should you choose Yalantis?

Yalantis builds computer vision systems that operate reliably on real production lines. Here is what sets us apart:

- We design each solution around your specific production conditions rather than applying a generic template. Lighting setup, product variability, and line speed are all accounted for before we write a line of code.

- With over 500 engineers experienced in AI, IoT, and embedded systems, we cover both software and hardware in-house, so you work with one team instead of coordinating between vendors.

- Over 17 years of engineering experience and more than 150 delivered projects mean we have already solved the complex problems that come with deploying vision systems in real factory environments.

- Enterprise clients such as Toyota Tsusho, Bosch, and KPMG trust us with production-critical systems, which reflects our ability to deliver under demanding conditions and at scale.

- More than 40 end-to-end IoT deployments give us the integration depth to connect vision systems with your existing PLCs, MES, and production infrastructure without disruption.

- Certified processes across ISO 9001, ISO 27001, and ISO 27701 ensure every solution we deliver meets enterprise security, quality, and compliance requirements.

- We stay involved after go-live. Model performance is monitored continuously and updated as your production conditions change, so the system stays accurate over time.

FAQ

How much does computer vision for manufacturing cost to implement?

Cost depends on the scope of the use case, the number of inspection points, and the level of integration with existing systems. A significant portion of the cost comes from hardware, including cameras, edge processing units, and installation across inspection points. Therefore, a focused pilot covering one inspection station costs significantly less than a facility-wide deployment. Most projects are scoped in phases, so you validate ROI before committing to a full rollout.

How long does it take to deploy computer vision solutions for manufacturing on a production line?

A pilot deployment typically takes 8 to 16 weeks from use case confirmation to live testing. Full-scale rollout depends on the number of lines and facilities involved. Projects with complex legacy integration or limited defect data take longer.

Do we need to replace existing manufacturing equipment to implement computer vision technology?

No. Computer vision systems integrate with your existing PLCs, MES, and production infrastructure. New hardware is limited to cameras, lighting, and edge processing devices installed alongside what you already run.

However, existing hardware does not always meet the requirements for reliable vision performance. In such cases, teams may need to upgrade specific components, and when that happens, Yalantis localizes the changes so that you don’t need to replace entire production lines.

How does computer vision AI for manufacturing compare to traditional quality control methods?

Traditional quality control methods rely on human inspectors who tire over long shifts and apply criteria inconsistently. Computer vision AI applies the same detection logic to every unit at full line speed. Detection rates improve by up to 90% compared to manual inspection, which directly reduces human error and cuts operational costs tied to returns and rework.

Can machine vision systems handle harsh factory environments with dust, heat, or vibration?

Yes, but it requires proper engineering upfront. Industrial enclosures protect cameras from dust and moisture. Controlled lighting compensates for glare and shifting ambient conditions. Lens and mounting configurations account for vibration. The physical setup matters as much as the deep learning algorithms running behind it.

What computer vision use cases in manufacturing deliver the fastest ROI?

Computer vision applications in manufacturing, such as defect detection and workplace safety monitoring, tend to deliver the clearest and fastest return. Defect detection reduces material waste and rework costs immediately. Safety monitoring reduces incident-related costs and insurance premiums. Both use cases produce measurable outcomes that are straightforward to track against the investment.

What data do we need to train a computer vision model for industrial inspection?

The system needs labeled images of both acceptable products and known defect types. Manufacturers rarely have enough defect images at the start, so teams supplement real production data with synthetic samples generated through image processing techniques. The more varied the training data, the better the model handles edge cases on the line.