Key takeaways:

- With IoT devices set to reach 29 billion by 2030, reliable IoT device testing is more critical than ever.

- IoT systems have four layers that all need testing: physical devices, connectivity, cloud platforms, and user apps.

- There are 8 types of IoT testing, including functional, performance, security, and usability.

- Security testing in IoT goes beyond the software layer. Firmware, hardware interfaces, and cloud APIs all need to be covered.

- Performance testing is the most demanding part as it ensures the system holds up under real-world loads and stress.

- As IoT systems grow, manual testing must be replaced or supported by IoT automation testing.

- An IoT testing framework combines tools, scripts, and scenarios into a structured, repeatable IoT QA process.

- A common testing stack uses JMeter, Telegraf, InfluxDB, and Grafana working together.

- Companies like Toyota and RAK Wireless avoided costly failures by adopting structured performance testing early.

- Internet of things software testing is more complex than regular software testing because it covers hardware, firmware, protocols, and real-time data.

- In regulated industries, compliance needs to be built into the testing process from day one, not treated as a final review step.

- Testing requirements differ by industry: healthcare IoT is driven by patient safety, industrial IoT by OT constraints, and logistics IoT by the challenges of operating at scale across unreliable networks.

- Embedding testing into your development lifecycle from the start saves time, money, and user trust.

The number of Internet of Things (IoT) devices worldwide is set to reach 29 billion in 2030 according to research firm Transforma Insights. These IoT devices include a wide range of items, from medical sensors, smart vehicles, smartphones, fitness trackers, and alarms to everyday appliances like coffee machines and refrigerators.

For IoT manufacturers and solution providers, ensuring that every device functions correctly and can handle increasing loads is a significant challenge. This is where Internet of Things (IoT) testing steps in. It ensures that IoT devices and systems meet their requirements and deliver expected performance.

In this guide, we explore IoT testing and quality assurance (QA), from the importance of comprehensive testing for complex IoT systems to expert insights on building end-to-end testing environments and real-world examples of companies that got it right. Let’s get into it.

The vital role of IoT testing

The success of connected products largely relies on thorough testing and quality assurance. IoT testing ensures that devices work together smoothly in real-world situations, even as systems expand. It’s not just about connectivity: testing must confirm that the system stays functional, efficient, and secure when conditions are less than ideal.

In fact, rigorous testing is necessary to:

- confirm that functionality, reliability, and performance meet expectations, even as systems scale

- identify security flaws and vulnerabilities that could lead to safety or privacy risks

- build trust in the system among stakeholders and users

IoT systems combine hardware, software, networks, and complex data flows that interact with the physical world. Given their complexity, each component requires thorough testing. Let’s take a closer look.

IoT systems: core components and complexity

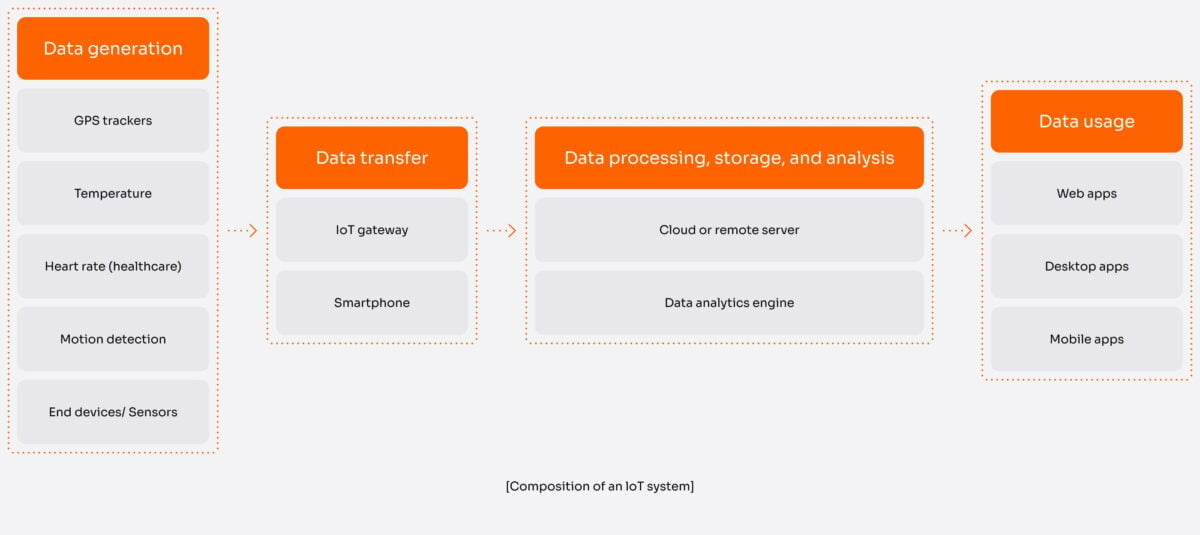

A typical IoT system consists of four main components, each of which should be tested.

- End devices (sensors and other devices that are the things in IoT technology)

- Specialized IoT gateways, routers, or other devices serving as such (for example, smartphones)

- Data processing centers (may include centralized data storage and analytics systems)

- Additional software applications built on top of gathered data (for example, consumer mobile apps)

Together, these components form a multi-layered architecture that constitutes every IoT solution:

- The things (devices) layer includes physical devices embedded with sensors, actuators, and other necessary hardware.

- The network (connectivity) layer is responsible for secure data transfer between devices and central systems, handling communication protocols, bandwidth, and data security.

- The middleware (platform) layer is the core of the IoT system, providing a centralized hub for data collection, storage, processing, and analytics. Notable middleware platforms include Microsoft Azure IoT, AWS IoT Core, Google Cloud IoT, and IBM Watson IoT.

- The application layer is where users interact with the IoT system. It converts device data into useful insights through user interfaces.

Incorporating IoT services into this architecture is essential at every layer. Good integration work ensures data moves reliably between devices and systems, security is addressed by design rather than added later, and the analytics layer has clean, consistent inputs to work with. Done well, it leads to a system that performs predictably and scales without unpleasant surprises.


Complexities introduced by multi-layered IoT architecture

Such a multi-layered architecture brings several complexities:

- Diverse devices that require seamless interaction. An IoT system can comprise devices from different manufacturers, each with unique specifications, firmware, and behavior. For example, a smart home system may incorporate lightbulbs from Philips, door locks from Yale, and sensors from Bosch.

- Coexistence of various communication protocols. For instance, a wearable device may use Bluetooth to connect to a smartphone but need a Zigbee home gateway to link to the cloud. Since each protocol has its own data rate, range, and power profile, devices must be tested against every protocol they depend on.

- Real-world integration challenges. IoT systems must seamlessly integrate data from different platforms and devices. For example, an industrial monitoring system needs to unify data from sensors and machines, which often involves dedicated IIoT data management to handle integration effectively.

- Real-time data processing. Many IoT applications require real-time data processing and large-scale analytics. This is what makes IoT application development one of the more technically demanding parts of building a reliable connected system. For instance, a fleet management system must analyze real-time data from thousands of vehicles to optimize performance.

- Adaptation to new standards. As new wireless protocols like Thread or IEEE 802.11ah emerge, IoT ecosystems need to adapt and evolve.

These complexities emphasize the importance of IoT testing, and we’ll now explore various forms of IoT testing in detail.


Crucial IoT testing types

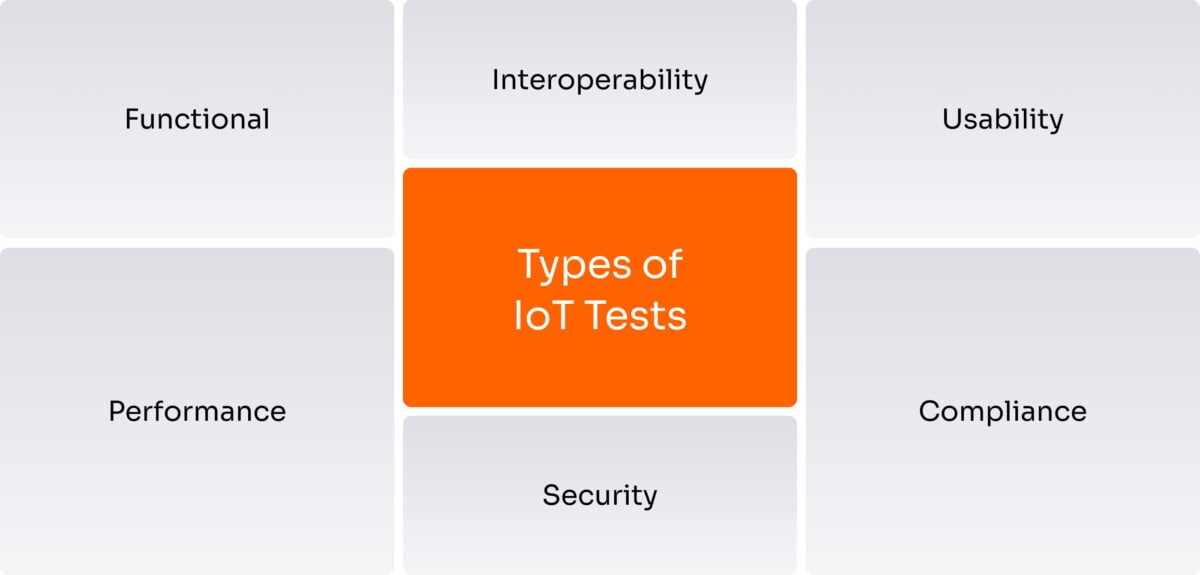

The main types of testing for IoT systems include:

- Functional testing ensures that the IoT solution works as intended by testing device interactions and user interface (UI) performance.

- Performance testing for IoT systems checks responsiveness, stability, and speed under typical and peak loads.

- Compatibility and interoperability testing ensures smooth communication between different devices, platforms, and protocols.

- Security testing identifies security vulnerabilities that could expose sensitive data and lead to safety or privacy risks.

- Usability testing evaluates real user interactions to enhance the user experience (UX).

- Compliance testing validates adherence to industry standards and government regulations.

- Network testing checks communications protocols like MQTT, Zigbee, and LoRaWAN.

- Resilience testing verifies system reliability under adverse conditions like hardware failures.

All types of testing in IoT have their place in the product life cycle, but we’ll zero in on performance testing. This is vital for IoT systems to ensure they effectively handle real-world data, users, and connectivity demands, staying responsive as demand grows.

Having covered the significance of IoT testing and its various types, it’s time to explore how to actually perform IoT testing.

IoT security testing

Security sits in a different category from most other IoT testing concerns. A performance issue means slower response times. A security failure in a connected device can mean something goes wrong in the physical world: medication delivered at the wrong dose, an industrial process interrupted by a remote attacker, a vehicle system behaving unexpectedly. The software-only mindset doesn’t transfer, and neither does a purely reactive approach to finding vulnerabilities.

This is one of the areas where working with an experienced IoT testing services partner makes a measurable difference. Knowing which attack surfaces to prioritize, which standards apply to your product, and how to structure testing so that findings are actionable rather than just documented takes time to learn from scratch.

That’s why IoT security testing needs to be treated as a discipline in its own right, not a checklist item at the end of the project. A useful starting point is the OWASP IoT Top 10, which catalogs the most common and consequential security weaknesses found in connected devices: things like weak default credentials, unencrypted communications, and insecure update mechanisms. Working through this list systematically is a good way to make sure you’re not missing the obvious before moving on to more targeted testing.

What IoT security testing actually covers:

- Firmware analysis. Examining device firmware for hardcoded credentials, insecure boot sequences, and outdated libraries with known vulnerabilities. This usually involves some degree of binary analysis and cross-referencing against CVE databases.

- Network and protocol security. Checking how the device communicates: whether MQTT messages are authenticated, whether Zigbee traffic can be intercepted, whether the device accepts connections it shouldn’t.

- API and cloud endpoint security. Verifying that the cloud backend enforces proper access controls and doesn’t expose data or functionality to unauthorized requests. Misconfigured APIs are a surprisingly common weak point.

- Physical access testing. Checking whether hardware interfaces like UART or JTAG can be used to extract data or modify firmware. If someone has physical access to the device, how much damage can they do?

- OTA update security. Confirming that firmware updates are signed and verified before installation, and that the update process can’t be abused to push unauthorized code.

- Penetration testing. Taking a step back from individual components and asking: what’s the most realistic path an attacker would take? Simulating that end-to-end helps surface vulnerability chains that component-level tests might miss.
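To make the firmware analysis step more concrete, here is a minimal Python sketch of a first-pass scan for hardcoded credentials. It mimics the Unix `strings` tool by pulling printable text out of a firmware image and matching it against a few patterns. The pattern list and function names are our own illustrative assumptions, not part of any standard tool, and a real audit would use a far larger rule set plus proper binary analysis.

```python
import re

# Hypothetical patterns for illustration only; real rule sets are much larger.
SUSPICIOUS_PATTERNS = [
    re.compile(rb"(?i)(password|passwd|pwd)\s*[=:]\s*\S+"),
    re.compile(rb"(?i)(api[_-]?key|secret|token)\s*[=:]\s*\S+"),
    re.compile(rb"-----BEGIN (RSA |EC )?PRIVATE KEY-----"),
]


def extract_strings(blob: bytes, min_len: int = 6) -> list[bytes]:
    """Return runs of printable ASCII at least min_len bytes long."""
    return re.findall(rb"[\x20-\x7e]{%d,}" % min_len, blob)


def find_credential_candidates(blob: bytes) -> list[str]:
    """Return printable strings that look like embedded credentials or keys."""
    findings = []
    for text in extract_strings(blob):
        if any(pattern.search(text) for pattern in SUSPICIOUS_PATTERNS):
            findings.append(text.decode("ascii"))
    return findings


if __name__ == "__main__":
    # Made-up bytes standing in for a firmware image.
    image = b"\x00\x01admin_password=hunter2\x00\xff\xfeMQTT_HOST=broker.local\x00"
    for hit in find_credential_candidates(image):
        print("review:", hit)
```

Every hit is only a candidate for manual review. A scan like this catches the obvious cases early, which leaves more time for the deeper checks listed above.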

For products in regulated sectors, security testing also needs to map to specific standards. The table below covers the most widely referenced ones.

| Standard | What it covers |
| --- | --- |
| IEC 62443 | Security requirements for industrial control systems; defines security levels and what testing is required at each level |
| OWASP IoT Top 10 | A practical reference for the most common IoT security vulnerabilities |
| NIST SP 800-213 | US federal guidance on IoT device cybersecurity; relevant beyond government procurement |
| ETSI EN 303 645 | Consumer IoT security baseline, increasingly referenced by EU regulators as a minimum bar |
| ISO/IEC 27001 | Information security management; applicable to the cloud and data processing parts of an IoT system |

One thing a checklist can’t replace is threat modeling. Before running tests, it’s worth asking who is actually likely to try to attack this device, and how. The threat profile for a consumer product is fundamentally different from that of an industrial controller or a medical device. Starting from that question helps you focus testing where the real risk sits, rather than treating every product as if it faces the same adversaries.


IoT compliance testing

For IoT products in regulated industries, passing your internal QA process is only part of the picture. Regulatory compliance is a product requirement with its own documentation obligations, its own evidence standards, and in many cases its own deadlines tied to market entry. It can’t be addressed at the end of the project.

A concrete example: a connected medical device can perform exactly as intended in every functional and performance test, and still fail an FDA review if the testing process wasn’t documented correctly. An industrial sensor can hit every performance benchmark and still be blocked from deployment in certain environments if it lacks the right certification. Getting this wrong late in a project is expensive. Getting it right from the start usually isn’t.

Compliance testing asks a different question than functional testing. It’s not “does this work?” but “does this satisfy the specific requirements the regulator cares about?” That distinction affects how you write test cases, what evidence you collect, and how you structure your documentation throughout the project.

Compliance requirements by industry:

| Industry | Key standards & regulations | What compliance testing covers |
| --- | --- | --- |
| Healthcare | FDA 21 CFR Part 11, MDR, IEC 62304, HIPAA, ISO 13485 | Software lifecycle documentation, audit trails, data integrity, cybersecurity by design |
| Industrial / OT | IEC 62443, IEC 61508, NIST CSF, CE marking | Functional safety, security level verification, network segmentation, resilience under attack |
| Automotive | ISO 26262, UNECE WP.29, ISO 21434 | Functional safety of electronic systems, vehicle cybersecurity management, OTA update security |
| Logistics & Supply Chain | GS1 standards, GDP, GDPR | Data accuracy, chain-of-custody traceability, temperature and condition monitoring integrity |

The teams that handle this most smoothly are the ones that treat compliance as part of their testing process rather than a separate audit track. That means mapping regulatory requirements to test cases early, keeping traceability documentation current as the product changes, and making sure the evidence you’d need for a submission is being generated naturally as part of normal QA rather than reconstructed under pressure before a deadline. For many of our clients, this is where having a testing partner with direct experience in regulated industries makes the biggest practical difference.
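Mapping regulatory requirements to test cases can start as a very small data structure. The Python sketch below shows one possible shape for a traceability check that flags requirements with no executed test behind them. The requirement IDs, test case IDs, and field names are hypothetical, and a real project would usually keep this data in a requirements or test management tool.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    req_id: str                      # hypothetical internal identifier
    source: str                      # standard or regulation it derives from
    test_case_ids: list[str] = field(default_factory=list)


def uncovered(requirements: list[Requirement], executed: set[str]) -> list[str]:
    """Return IDs of requirements with no linked test case among the executed ones."""
    return [
        req.req_id
        for req in requirements
        if not set(req.test_case_ids) & executed
    ]


if __name__ == "__main__":
    # Illustrative entries only; the mapping to each standard is an assumption.
    matrix = [
        Requirement("REQ-001", "IEC 62304", ["TC-10", "TC-11"]),
        Requirement("REQ-002", "FDA 21 CFR Part 11", ["TC-20"]),
        Requirement("REQ-003", "HIPAA"),  # no test linked yet
    ]
    executed_in_last_run = {"TC-10", "TC-31"}
    print(uncovered(matrix, executed_in_last_run))
```

Running a check like this in every QA cycle is one way to keep traceability evidence current instead of rebuilding it before a submission.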

What does it take to test IoT solutions?

In some sense, IoT testing is no different from the testing process of any web or desktop software application. To spot and reproduce an issue, you need to simulate the scenario in which it occurs on devices found within the IoT ecosystem. Given the variety of IoT devices that may be part of an ecosystem, IoT testing is more difficult than simply creating a script and running it as you would for a mobile app.

To provide IoT product testing services, QA engineers usually spend hours planning and setting up the infrastructure. Then, it takes time to hone the testing process, report on the progress, and analyze the results. Of special concern is the process of building testing standards for end-to-end testing, as QA engineers need to develop auxiliary software solutions for IoT load testing to emulate a large number of specific devices while preserving their essential characteristics.

How can you perform IoT testing?

To make sure an IoT ecosystem works properly, we need to test all of its functional elements and the communication among them. This means that IoT testing covers:

- Functional end device firmware testing

- Communication scenarios between end and edge devices

- Functional testing of data processing centers, including testing of data gathering, aggregation, and analytics capabilities

- End-to-end software solution testing, including full-cycle user experience testing

Approaching IoT testing solutions, service providers should decide on the IoT tests to apply and prepare to combine different quality assurance types and scenarios.

IoT testing involves a full range of quality assurance services. The way engineers test the system depends on its current level of maturity, the assets available to perform testing, and the requirements formed by the product team.

Manual QA for functional testing

When it comes to testing IoT solutions, manual testing plays a crucial role in ensuring that all functional requirements are met. Manual QA engineers perform various tasks in IoT projects, such as:

- setting up functionality to test on real devices as well as emulators or simulators

- running tests on both real devices and emulators or simulators

- balancing tests on real devices with tests on emulators or simulators

Manual functional testing remains essential for IoT projects and is applicable in early development stages and throughout product refinement. However, due to the increasing complexity of IoT ecosystems, IoT automation testing becomes a necessity for most solutions.

Automating tests for complex IoT systems

Here are some best practices for implementing IoT automation testing in complex systems:

- Select the right tests to automate. Not all tests benefit from automation. Focus first on repetitive tasks that are time-consuming, frequent, and tedious to perform manually.

- Invest in tailored IoT testing tools. Choose testing platforms designed specifically for IoT protocols, devices, and architectures. Look for capabilities like network emulation and virtual device simulation.

- Modularize for maintainability. Break tests into modular, reusable scripts. This simplifies updating as requirements evolve.

- Simulate real-world conditions. Mimic real-life scenarios like varying network states, unexpected device failures, and different data loads. This builds resilience.

- Integrate into CI/CD pipelines. Include Internet of Things automated testing in continuous integration and deployment workflows. This enables rapid validation of code changes.

- Ensure scalability. As the IoT system expands, tests must scale smoothly, such as through cloud-based parallel testing.

- Regularly maintain tests. Review and update tests frequently as the system changes to keep them relevant.

- Provide detailed failure alerts. Automated tests should instantly alert developers of any failures with logs and descriptions to enable quick diagnosis.

- Go for virtual test environments. Virtual IoT simulations allow for efficient testing without physical devices and infrastructure.

- Incorporate real-world user feedback. Gather user feedback through monitoring and surveys. Prioritize issues based on importance, and focus on demonstrating responsiveness.
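As an illustration of simulating real-world conditions with virtual devices, here is a minimal Python sketch of a simulated sensor that sends readings over an unreliable link. The class names, the temperature range, and the network parameters are assumptions made for this example. It does not use a real protocol stack, so treat it as a starting point rather than a full emulator.

```python
import random
from dataclasses import dataclass


@dataclass
class NetworkProfile:
    """Hypothetical description of a network condition to emulate."""
    loss_rate: float        # probability (0.0 to 1.0) that a message is dropped
    min_latency_ms: float
    max_latency_ms: float


class VirtualSensor:
    """Emulates a temperature sensor sending readings over an unreliable link."""

    def __init__(self, device_id: str, profile: NetworkProfile, seed: int = 0):
        self.device_id = device_id
        self.profile = profile
        self._rng = random.Random(seed)  # seeded so test runs are repeatable

    def send(self, count: int) -> list[dict]:
        """Return only the messages that arrive, each with a simulated latency."""
        delivered = []
        for seq in range(count):
            if self._rng.random() < self.profile.loss_rate:
                continue  # message lost in transit
            delivered.append({
                "device_id": self.device_id,
                "seq": seq,
                "temperature_c": round(self._rng.uniform(18.0, 26.0), 2),
                "latency_ms": self._rng.uniform(
                    self.profile.min_latency_ms, self.profile.max_latency_ms
                ),
            })
        return delivered


if __name__ == "__main__":
    flaky_cellular = NetworkProfile(loss_rate=0.3, min_latency_ms=200, max_latency_ms=800)
    sensor = VirtualSensor("sensor-001", flaky_cellular, seed=42)
    messages = sensor.send(1000)
    print(f"delivered {len(messages)} of 1000 messages")
```

Gaps in the `seq` values show which messages were lost, so the system under test can be checked for how it handles missing data. Because the generator is seeded, a failing run can be replayed exactly in a CI/CD pipeline.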

Automation is especially valuable in the realm of performance testing. Let’s explore why.

IoT test automation for performance testing

Automation becomes a requirement as IoT systems scale. Scaling means more devices generating vast amounts of data, which often leads to degraded performance and bottlenecks.

To identify and replicate issues related to system performance in scenarios with substantial data generation and sharing, we can use the following types of testing:

- Volume testing. To conduct volume testing, we load the database with large amounts of data and watch how the system operates with it, i.e. aggregates, filters, and searches the data. This type of testing allows you to check the system for crashes and helps to spot any data loss. It’s responsible for checking and preserving data integrity.

- Load testing checks if the system can handle the given load. In terms of an IoT system, various scenarios can be covered depending on the test target. The load may be measured by the number of devices working simultaneously with the centralized data processing logic or by the number of end devices or packets a single gateway handles. The metrics for measuring load include response time and throughput rate.

- Stress testing measures how an IoT system performs when the expected load is exceeded. In performing stress tests, we aim to understand the breaking point of applications, firmware, or hardware resources and define the error rate. Stress testing also helps to detect code inefficiencies such as memory leaks or architectural limitations. With stress testing, QA engineers will understand what it takes for a system to recover from a crash.

- Spike testing verifies how an IoT system performs when the load is suddenly increased.

- Endurance testing checks if the system is able to remain stable and can handle the estimated workload for a long duration. Such tests are aimed at detecting how long the system and its components can operate in intense usage scenarios or without maintenance.

- Scalability testing measures system performance as the number of users and connected devices grows. It helps you understand the limits as traffic, data, and simultaneous operations increase and helps you predict if the system can handle certain loads. If the system breaks, there may be a need to rework its architecture or infrastructure.

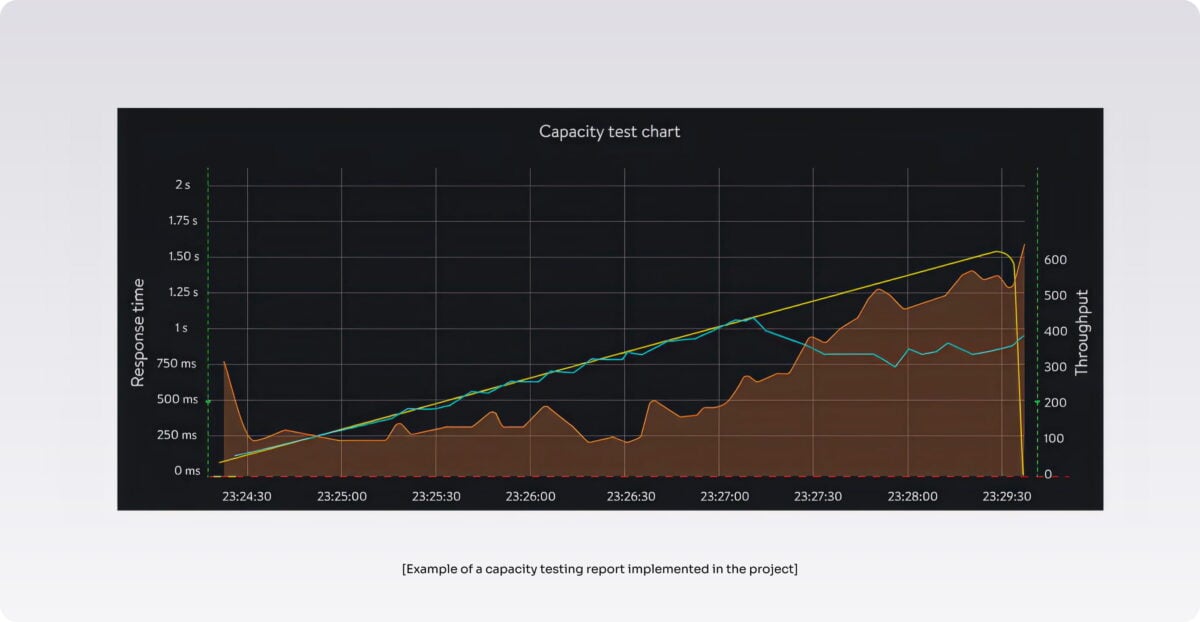

- Capacity testing determines how many users and connected devices an IoT application can handle before either performance or stability becomes unacceptable. Here, our aim is to detect the throughput rate and measure the response time of the system when the number of users and connected devices grows.
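Response time, throughput, and error rate come up in most of the test types above. The Python sketch below shows one simple way to derive them from raw results. The function names are ours, and the percentile uses the nearest-rank method, which is only one of several common definitions.

```python
import math


def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def summarize(latencies_s: list[float], duration_s: float, errors: int = 0) -> dict:
    """Summarize one test run: successful requests per second, latency percentiles, error rate."""
    total = len(latencies_s) + errors
    return {
        "throughput_rps": len(latencies_s) / duration_s,
        "p50_s": percentile(latencies_s, 50),
        "p95_s": percentile(latencies_s, 95),
        "error_rate": errors / total,
    }


if __name__ == "__main__":
    # Made-up latencies (in seconds) for five successful requests over a 5-second run.
    print(summarize([0.2, 0.4, 0.6, 0.8, 1.0], duration_s=5.0, errors=1))
```

Percentiles are usually more informative than averages here, because a few slow responses can hide behind a healthy-looking mean.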

Running these types of performance tests becomes crucial at some point in every IoT project’s life. At the same time, checking the performance of a complex IoT system is itself a challenge, as it involves deploying complex infrastructure and programming a number of simulators and virtual devices to mimic an IoT network.

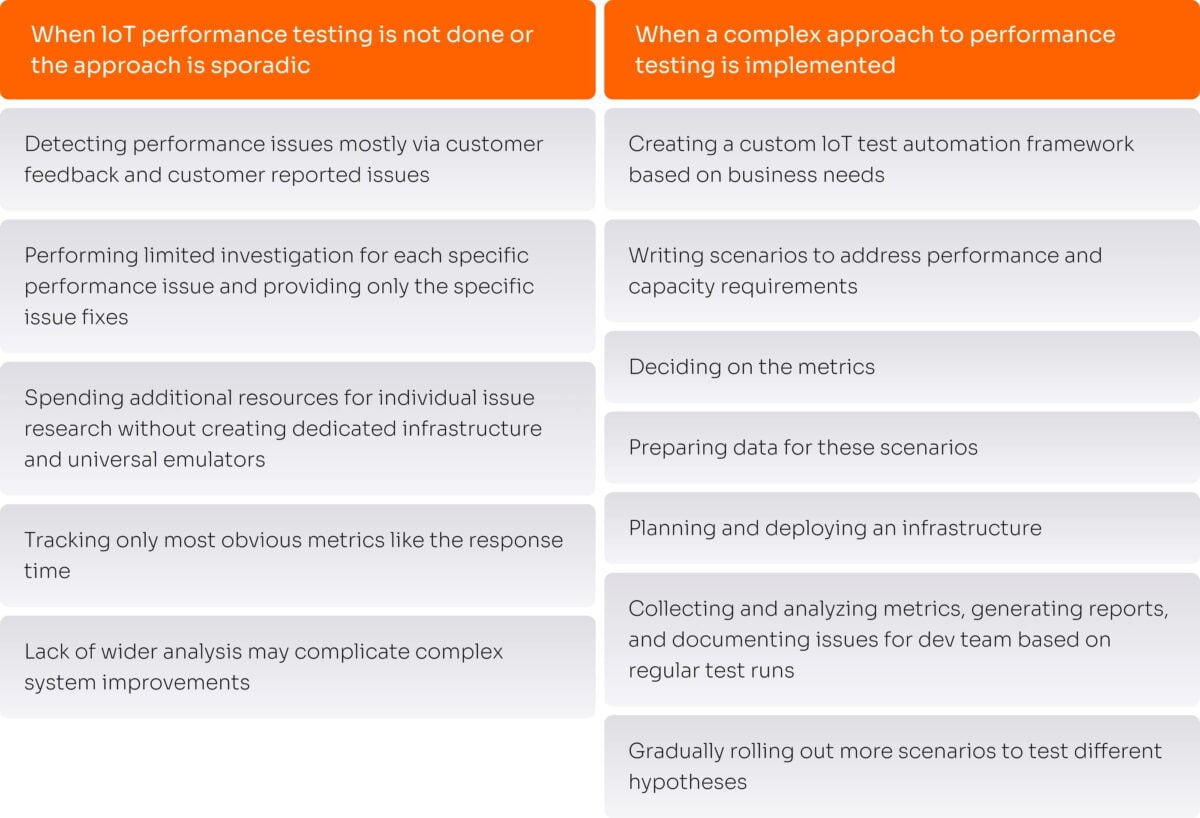

Why we focus on performance testing

To understand the importance of performance testing for IoT projects, let’s compare what the QA process looks like without IoT device performance testing and with a complex IoT testing strategy.

At Yalantis, we provide IoT testing services for projects of different scales and complexities. Among our clients are well-known automotive brands and consumer electronics companies, manufacturers of IoT devices, and vehicle sharing startups. Their experience has proved that performance testing is an essential component of the success of every IoT system.

Embedding performance testing into the process of IoT software development requires a systematic approach. To make regular testing a part of your software development lifecycle (SDLC), we advocate implementing an IoT testing framework.

Internet of Things testing framework

An Internet of Things testing framework is a structure that consists of IoT testing tools, scripts, scenarios, rules, and templates needed for ensuring the quality of an IoT system.

An IoT testing framework contains guidelines that describe the process of testing performance with the help of dedicated tools. In a nutshell, implementing a performance testing framework helps mature IoT projects approach QA automation in a comprehensive and systematic way.

Although an IoT test automation framework establishes a system for regular performance measurement and contributes to continuous and timely project delivery, there are cases when it’s optional and cases in which such an approach to IoT quality assurance is a must. The latter include:

- Projects with average loads

- Projects with high peak loads (during specific hours, time-sensitive events, or other periods)

- Rapidly growing projects

- Projects with strict requirements for fault tolerance; critical systems

- Projects sensitive to response time (solutions where decision-making relies on real-time data)

How to implement an Internet of Things testing framework

Quality assurance is an essential part of the SDLC. An IoT testing framework is usually implemented based on the following needs of an IoT service provider:

- Define the current load the system can handle

- Set up and measure the expected level of system performance

- Identify weak points and bottlenecks in the system

- Get human-readable reports

- Automate performance testing and conduct it regularly

A typical flow for the IoT testing process includes four consecutive steps.

- Collect business needs

- Create testing scenarios

- Run performance tests

- Based on the needs specified, address issues and decide on possible improvements

A typical team for performance testing an IoT project, considering the full IoT testing process, should include the following specialists:

- A business analyst who’s in charge of understanding the needs of the IoT business and assisting with defining the scope of usage scenarios as well as the context in which they are applicable, key metrics, and customer priorities

- A solution architect to set up and deploy IoT testing infrastructure that covers necessary scenarios

- A DevOps engineer to streamline the process of IoT development by dealing with complex system components under the hood (for example, implementing or improving container mechanisms and orchestration capabilities for more effective CI/CD)

- A performance analyst (QA automation engineer) who’s responsible for engineering emulators for performance testing, running the tests, and measuring target performance indicators

Depending on the project’s complexity and maturity, the team may be extended by involving a backend engineer, a project manager, and more performance analysts.

What tasks can an IoT testing framework solve?

“What our clients usually lack in their IoT testing methodology is regular assurance and reporting. A performance testing framework can bridge these gaps and is easily aligned with the company’s business processes.”

– Alexandra Zhyltsova, Business Analyst at Yalantis

Technically, a framework is a set of performance testing tools connected within a system to help IoT testing companies achieve the expected results, i.e. ensure stable performance of an IoT system in different scenarios.

With an IoT testing framework, QA engineers and IoT developers receive a common tool with the following capabilities:

- Setting up load profiles

- Ensuring test scalability as the system grows

- Providing real-time visual representations of test results
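A load profile is essentially a schedule of how many simulated devices should be active at each step of a test. Here is a minimal Python sketch of three common shapes. The profile names and the "middle third" spike window are assumptions chosen for illustration, not settings from any particular tool.

```python
def load_profile(kind: str, base: int, peak: int, steps: int) -> list[int]:
    """Return the target number of simulated devices for each step of a test."""
    if steps < 3:
        raise ValueError("use at least 3 steps")
    if kind == "steady":
        # Constant load for the whole run (load and endurance tests).
        return [base] * steps
    if kind == "ramp":
        # Linear growth from base to peak (scalability and capacity tests).
        return [base + round((peak - base) * i / (steps - 1)) for i in range(steps)]
    if kind == "spike":
        # Base load with a sudden jump to peak during the middle third (spike tests).
        start, end = steps // 3, 2 * steps // 3
        return [peak if start <= i < end else base for i in range(steps)]
    raise ValueError(f"unknown profile kind: {kind}")


if __name__ == "__main__":
    for kind in ("steady", "ramp", "spike"):
        print(kind, load_profile(kind, base=100, peak=500, steps=6))
```

Keeping profiles as plain data like this makes it easier to reuse the same scenario across test runs and to scale the numbers up as the system grows.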

Let’s look at the structure of a typical Internet of Things testing framework.

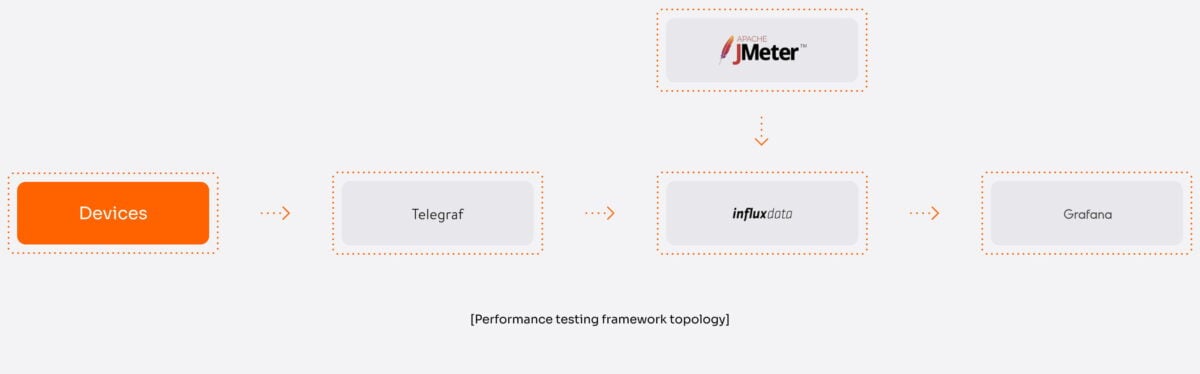

IoT testing framework infrastructure

When deciding on the IoT testing infrastructure, a QA team starts by analyzing the given IoT system. Taking into account the specific requirements within the scope of performance testing, they decide on the tools to use and establish communication between them to receive the desired outcome, i.e. a detailed report of performance metrics. Although framework infrastructure differs from case to case, the aim is always to collect, analyze, and present data.

A typical process of IoT testing for performance measurement within the implemented framework includes the following steps:

- Data is collected from resources within the IoT system (devices, sensors).

- A third-party service (Telegraf) is used to collect server-side metrics like CPU temperature and load.

- Collected data is sent to a client-side app capable of analyzing and reporting performance-related metrics (for example, Grafana).

- An Internet of Things testing tool for performance measurement generates the load and collects the necessary metrics (JMeter).

- Analyzed data from both Telegraf and JMeter is retrieved and stored in a dedicated time-series database (InfluxDB).

- Data is presented using a data visualization tool (Grafana and built-in JMeter reports).
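To show what a metric looks like on its way into the time-series database, here is a simplified Python sketch that formats one data point in InfluxDB line protocol (measurement, optional tags, fields, timestamp). The measurement, tag, and field names are made up for this example. The escaping covers only the common cases, so a production setup should rely on Telegraf or an official client library instead.

```python
def _escape(value: str, chars: str) -> str:
    """Backslash-escape each character in `chars` that appears in `value`."""
    for ch in chars:
        value = value.replace(ch, "\\" + ch)
    return value


def _format_field(value) -> str:
    if isinstance(value, bool):      # check bool first: bool is a subclass of int
        return "true" if value else "false"
    if isinstance(value, int):
        return f"{value}i"           # integers carry an "i" suffix
    if isinstance(value, float):
        return repr(value)
    escaped = str(value).replace("\\", "\\\\").replace('"', '\\"')
    return f'"{escaped}"'            # strings are double-quoted


def to_line_protocol(measurement: str, tags: dict, fields: dict, timestamp_ns: int) -> str:
    """Format one point as: measurement[,tag=value...] field=value[,...] timestamp."""
    if not fields:
        raise ValueError("a point needs at least one field")
    head = _escape(measurement, ", ")
    for key, val in sorted(tags.items()):
        head += f",{_escape(key, ',= ')}={_escape(str(val), ',= ')}"
    body = ",".join(f"{_escape(k, ',= ')}={_format_field(v)}" for k, v in fields.items())
    return f"{head} {body} {timestamp_ns}"


if __name__ == "__main__":
    # Hypothetical server-side metrics, similar to the CPU load and temperature mentioned above.
    print(to_line_protocol(
        "cpu", {"host": "gw 01"}, {"load": 0.75, "temp_c": 61}, 1700000000000000000
    ))
```

Tags (such as the host name) are indexed and suit values you filter or group by in Grafana, while fields carry the measured values.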

When is the right time to implement an IoT testing framework?

“Of course, when the product is at the MVP stage, deploying a full-scale performance testing infrastructure is not something IoT providers should invest in. When the product is mature enough, a need to standardize the approach to testing appears.”

– Alexandra Zhyltsova, Business Analyst at Yalantis

“The optimal time to get started with performance testing is two to three months before the software goes to full-scale production (alpha). However, you should make it a part of your IoT development strategy and put it on your agenda when you’re approaching the development phase. At the same time, there is no good or bad time to implement a performance testing framework. You achieve different aims with it at different stages.”

– Artur Shevchenko, Head of QA Department at Yalantis

Below, we highlight some real cases where implementing a QA testing framework helped our clients prevent and solve various performance issues at different stages of the product life cycle.


Testing IoT: use cases

For this section, we’ve selected stories of three different clients that worked with us on improving their IoT testing strategy. Let’s look at how implementing an IoT testing framework worked for them.

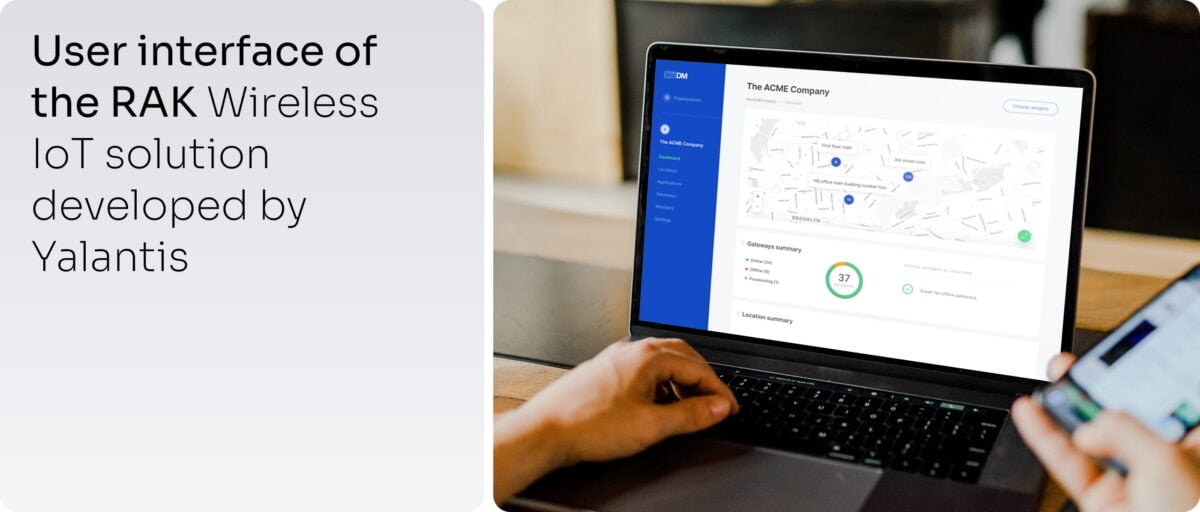

RAK Wireless

RAK Wireless is an enterprise company that provides a SaaS platform for remote fleet management. The product deals with the setup and management of large IoT networks, for which performance is crucial.

Initial context. We’ve been collaborating with RAK Wireless for two years. During this time, we’ve gradually moved from situational testing to implementing a complex performance testing approach.

When the product was in private beta, the number of system users was limited, and there was no need to get started with performance testing. In the course of development, moving to public beta and then to an alpha release, we focused on estimating the system load and understood that we were expecting an increase in the number of users and devices connected to the IoT network.

The solution’s main target customers are enterprises and managed service providers handling tens and hundreds of different IoT networks simultaneously. For such customers, performance is one of the key product requirements.

With more customers starting to use the product, the number of IoT devices was growing as well. To prevent any issues that could appear, the client considered changing the approach to IoT testing from ad hoc to a more complex one.

Challenges. The product handles the full IoT network setup process, from connecting end devices to onboarding gateways and monitoring full network operability.

- The first challenge was to carefully identify the testing targets and plan test scenario coverage. We chose a combination of single-operation scenarios and complex usage flow scenarios to define the metrics to collect at each step.

- Once test coverage was planned, another challenge was to properly emulate a number of proprietary IoT devices of different types to make sure we were using optimal infrastructure resources for a given scenario. We ended up creating several different types of emulators. For some scenarios, a data-sending emulation was enough; others required emulating full device firmware to replicate entire virtual devices.

What we achieved with the performance testing framework. We aimed to stay ahead of the curve and detect performance issues before any product updates were released. Implementing the testing framework allowed the product team to take preventive actions and plan cloud infrastructure costs. Transparent reporting and optimal measurement points allowed us to quickly report detected issues to the proper engineering team in charge of testing firmware, cloud infrastructure, or web platform management.

Automating performance testing gave us consistent results and made regular system compliance checks possible. A long-term performance optimization strategy helped us achieve and maintain the desired response time, balance system load by verifying connectivity with device data endpoints, and ensure system reliability under normal and peak loads.


Toyota

Initial context. Our client is a member of Toyota Tsusho, a Toyota Group corporation focused on digital solutions development.

One of the products we helped our client develop was an end-to-end B2B solution for fleet management. Vehicles were equipped with smart sensors that connected to the system and sent telemetry data over TCP.

Some of the features provided by the IoT solution included:

- Building and tracking routes for drivers

- Tracking vehicle operation details

- Providing real-time assistance to drivers along the route

- Providing ignition control

Challenges. Taking into account the scale of the business, the client needed to maintain a system that comprised about 1,000 end devices working flawlessly in real time.

Among the tasks we aimed to solve with the testing framework were:

- Ensuring the delay in system response to data received from devices didn’t exceed two seconds

- Preventing any breakdowns related to the performance of sensors tracking geolocation, vehicle mileage, and fuel consumption

- Establishing a reporting system that would allow for processing and storing up to 2.5 billion records over three months
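To get a feel for what these targets imply, a rough back-of-the-envelope calculation helps. The sketch below assumes that "three months" means 90 days, that the records are spread evenly over time, and that they all come from roughly 1,000 devices. None of these assumptions is stated in the project requirements, so treat the result as an order-of-magnitude estimate only. The helper names are illustrative.

```python
SECONDS_PER_DAY = 86_400


def average_ingest_rate(total_records: int, days: int) -> float:
    """Average records per second needed to accumulate total_records in the given days."""
    return total_records / (days * SECONDS_PER_DAY)


def delay_violations(delays_s, limit_s=2.0):
    """Return the measured response delays (in seconds) that exceed the limit."""
    return [delay for delay in delays_s if delay > limit_s]


# Assumption: three months is taken as 90 days; fleet size is about 1,000 devices.
rate = average_ingest_rate(2_500_000_000, days=90)
per_device = rate / 1_000
print(f"{rate:.1f} records/s overall, {per_device:.3f} records/s per device")
```

Under these assumptions, the average works out to a little over 320 records per second, or about one record from each device every three seconds. Real traffic is bursty, so peak rates used in load tests would need to sit well above this average.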

What we achieved with the performance testing framework. We created a simulator to simultaneously run performance tests on the maximum number of end devices.

The framework included capacity testing and presented the results in reports that display a specified number of records.

Running volume tests helped us spot product infrastructure bottlenecks and suggest infrastructure improvements.

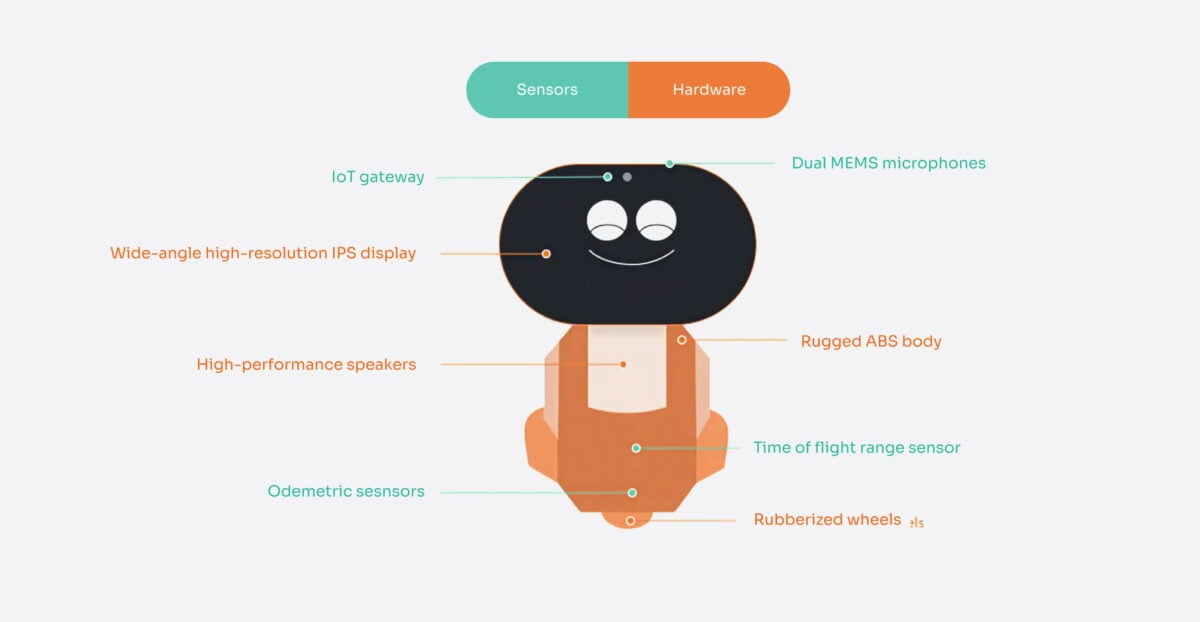

Miko 3

Initial context. Our client is a startup that produces hardware and software for kids, engaging them to learn and play with Miko, a custom AI-powered robot running on the Android operating system. We were dealing with the third version of the product, which quickly became extremely successful. The client was doing some manual and automated testing to detect and fix performance issues. But the lack of a systematic approach to quality assurance resulted in system breakdowns during periods of peak load.

Challenges. Issues with performance appeared after the company sold a number of robots during the winter holidays. When too many devices were connected to the network, customers started to report various issues that prevented their kids from playing with the robots. It became obvious that the system was not able to handle the load.

The client understood that they needed to adjust and expand their IoT performance testing strategy, as the product was scaling and the load was only expected to grow.

What we achieved with a performance testing framework. We aimed to establish a steady process of load testing for both the main use cases (such as voice recognition for Miko 3) and typical maintenance flows (such as over-the-air firmware updates).

Implementing the performance testing framework allowed us to build a system capable of collecting and analyzing detailed data about the system’s performance. Having this information, we managed to define the system’s limitations and detect bottlenecks in the architecture design as well as plan architectural optimizations.

Such an approach allowed us to systematically predict system load by measuring response times and throughput rates and analyzing error messages. A stable reporting process helped to distribute responsibility among the IoT security services testing team and optimize development costs.
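The three signals mentioned above (response times, throughput, and errors) can be summarized from raw request samples with very little code. The sketch below is illustrative only: the `Sample` structure and the choice of the 95th percentile are assumptions, not a description of the reporting used on the project.

```python
import math
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Sample:
    started_at: float  # seconds since the start of the test run
    elapsed_s: float   # response time of this request
    ok: bool           # False if the request returned an error


def percentile(values: List[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of data at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(samples: List[Sample]) -> Dict[str, float]:
    """Reduce raw samples to p95 response time, throughput, and error rate."""
    first_start = min(s.started_at for s in samples)
    last_end = max(s.started_at + s.elapsed_s for s in samples)
    return {
        "p95_s": percentile([s.elapsed_s for s in samples], 95),
        "throughput_rps": len(samples) / (last_end - first_start),
        "error_rate": sum(not s.ok for s in samples) / len(samples),
    }
```

Tracking the same summary across runs with a growing number of simulated devices is one simple way to see where response time or error rate starts to climb.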

How testing requirements differ by industry

The general principles of IoT testing hold across industries. But what you’re actually testing for, and what’s at stake when something slips through, varies a lot depending on where the product will be deployed. A missed edge case in a consumer gadget is a support ticket. The same missed edge case in a medical device or industrial controller is a different kind of problem.

Below is a closer look at how testing priorities take shape in three sectors where we see the most distinct requirements.

Healthcare IoT

Connected medical devices sit at a point where software quality and patient safety overlap directly. Whether it’s a wearable cardiac monitor, a connected infusion pump, or a diagnostic sensor feeding data into a clinical decision system, a software defect here isn’t just a product problem. It can have real consequences for the person using the device.

When a product qualifies as Software as a Medical Device (SaMD), it comes with a regulatory framework that shapes the entire testing process. Standards like IEC 62304 define how software lifecycle activities need to be documented. ISO 14971 sets out risk management requirements. And regulators (the FDA, the EMA, and their counterparts elsewhere) expect to see evidence of all of it in a form that holds up to scrutiny. This is why testing in this space can’t be designed purely around whether the software works. It also has to generate the right documentation as it goes.

Beyond the regulatory side, the practical testing priorities in healthcare IoT tend to center on a few core concerns:

- Data accuracy. Sensor readings that inform clinical decisions need to be validated carefully, not just under normal operating conditions but in the scenarios where accuracy is hardest to maintain, such as low battery, signal interference, or physical movement.

- Alert reliability. Real-time monitoring systems are only useful if their alerts are trustworthy. Testing needs to confirm that threshold triggers fire when they should, within the time bounds the clinical use case requires.

- Cybersecurity. Patient data is among the most sensitive there is, and medical devices are increasingly targeted. Penetration testing and encryption validation aren’t optional extras at this point. Regulators expect them.

- Interoperability with clinical systems. Most medical IoT devices don’t exist in isolation. They exchange data with hospital information systems and EHR platforms, usually via standards like HL7 FHIR. This integration layer deserves dedicated testing, because failures here often don’t look like failures until data ends up in the wrong place.

- Usability in real clinical conditions. Devices used by clinicians need to work correctly under the conditions those clinicians actually face. Time pressure, noisy electromagnetic environments, and protective equipment are all part of daily clinical reality. Lab-based usability testing often misses this.
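The alert reliability point above lends itself to an automated check: feed a known sequence of readings into the alerting logic and measure how long it takes to fire. The sketch below is a simplified stand-in for real alerting logic. The rule (fire after a number of consecutive readings above a threshold) and all values in the usage note are hypothetical; actual thresholds and time bounds come from the clinical requirements.

```python
from typing import Iterable, Optional, Tuple


def alert_latency(
    readings: Iterable[Tuple[float, float]],
    threshold: float,
    consecutive: int = 1,
) -> Optional[float]:
    """Seconds from the start of a breach to the alert firing.

    readings are (timestamp_s, value) pairs in time order. The alert fires
    once `consecutive` readings in a row exceed `threshold`. Returns None
    if the alert never fires.
    """
    streak = 0
    streak_start = 0.0
    for timestamp, value in readings:
        if value > threshold:
            if streak == 0:
                streak_start = timestamp  # first reading of this breach
            streak += 1
            if streak >= consecutive:
                return timestamp - streak_start
        else:
            streak = 0  # breach interrupted; start counting again
    return None
```

A test would then assert both that the alert fires and that it fires in time, for example `alert_latency(trace, threshold=120, consecutive=3) <= 5` for a simulated heart-rate trace, and that a trace with only a brief spike returns `None`.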

Industrial IoT (IIoT)

Industrial environments introduce a set of constraints that software-focused QA teams sometimes underestimate. IoT devices deployed in a manufacturing plant, an energy facility, or a water treatment system interact with Operational Technology (PLCs, SCADA controllers, industrial networks) that has very different characteristics from typical IT infrastructure.

OT systems often run on proprietary protocols like Modbus or PROFINET. They’re designed around deterministic timing, where a response that arrives a few milliseconds late can be as bad as no response at all. And they typically can’t be taken offline for testing the way a web service can. A firmware update process that works fine on a test bench may create real problems when deployed against a live production line. Getting testing right in this environment requires dedicated infrastructure that reflects the actual OT context, not just a generic test environment.

The testing areas that matter most in industrial deployments are:

- Functional safety. Safety instrumented functions need to perform correctly under all expected conditions, including failure modes. This is formalized in standards like IEC 61508, and verification evidence is typically required.

- ICS and SCADA security. Industrial control systems are targets for ransomware groups and more sophisticated actors alike. Security testing needs to cover how the system responds to unexpected or malicious commands, not just whether it works correctly under normal inputs.

- OT protocol handling. IoT gateways that bridge IT and OT networks need to be tested for correctness at the protocol level. Data loss or mistranslation here can have downstream consequences that are hard to trace.

- Resilience under failure. Industrial systems are expected to continue operating, or fail in a safe and predictable way, when components drop out. Sensor dropout, network partition, and power interruption scenarios should all be part of the test plan.

- Environmental conditions. Industrial devices are deployed in environments that are nothing like a lab. Testing under temperature extremes, vibration, dust, and electromagnetic interference isn’t optional. It’s part of qualifying a device for deployment.
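As one concrete example of protocol-level correctness, a gateway that speaks Modbus over a serial line (Modbus RTU) must compute and verify the CRC-16 checksum on every frame. The sketch below implements the standard Modbus CRC-16 and a frame check. The function names are illustrative, and the example covers serial framing only: Modbus TCP does not carry this checksum.

```python
def modbus_crc16(data: bytes) -> int:
    """Compute the Modbus RTU CRC-16 (initial value 0xFFFF, reflected polynomial 0xA001)."""
    crc = 0xFFFF
    for byte in data:
        crc ^= byte
        for _ in range(8):
            if crc & 0x0001:
                crc = (crc >> 1) ^ 0xA001
            else:
                crc >>= 1
    return crc


def append_crc(payload: bytes) -> bytes:
    """Return payload followed by its CRC, low byte first as Modbus RTU requires."""
    return payload + modbus_crc16(payload).to_bytes(2, "little")


def frame_is_valid(frame: bytes) -> bool:
    """Check that the last two bytes of the frame match the CRC of the rest."""
    if len(frame) < 4:  # address + function code + 2 CRC bytes at minimum
        return False
    return modbus_crc16(frame[:-2]) == int.from_bytes(frame[-2:], "little")
```

A gateway test suite can use helpers like these to build valid frames, flip individual bits, and confirm that corrupted frames are rejected rather than silently translated and passed upstream.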

Logistics and supply chain IoT

Logistics IoT systems tend to involve large numbers of devices spread across wide geographic areas, operating in conditions where network connectivity is unreliable and the cost of silent failure is high. A temperature sensor in a pharmaceutical cold chain that stops reporting doesn’t announce itself. The problem surfaces later, when the data gap is discovered or when product integrity is called into question.

The testing priorities in this sector reflect those constraints:

- Cold chain integrity. Temperature and humidity sensors need accuracy validation across the full range they’ll encounter in the field. Equally important is testing what happens at the data level when a handoff occurs between sensor, gateway, and cloud, because this is where records are most commonly lost or duplicated.

- Behavior during connectivity loss. Devices in transit will lose signal. The question is whether they handle it gracefully, buffering data locally and then syncing cleanly when connectivity returns without creating gaps or duplicates in the record.

- Scale testing. A system that performs well with a few hundred devices may degrade in unexpected ways at a few thousand. Load testing at a scale that reflects actual fleet size is the only reliable way to understand where the ceiling is.

- Location accuracy in difficult environments. GPS performance in urban areas, tunnels, and high-interference zones is genuinely different from open-road conditions. For any application where location data drives decisions, this needs to be tested in the environments where the device will actually operate.

- Integration with backend systems. Logistics IoT platforms typically feed data into ERP and warehouse management systems. End-to-end testing of these integrations, covering data transformation, timing, and error handling, tends to surface issues that component-level testing misses.
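The connectivity-loss behavior described above, buffering locally and syncing without gaps or duplicates, is commonly achieved with sequence numbers on the device and idempotent ingestion on the backend. The sketch below simulates both sides in memory so the behavior can be tested without a network. All class and method names are hypothetical, and the in-memory `Backend` stands in for a real ingestion service.

```python
from collections import deque
from typing import Callable, Deque, Dict, List, Tuple

Message = Tuple[int, float]  # (sequence number, sensor value)


class BufferedUplink:
    """Device side: keep each reading until the backend acknowledges it."""

    def __init__(self, send: Callable[[Message], bool]) -> None:
        self._send = send  # returns True only when delivery is acknowledged
        self._pending: Deque[Message] = deque()
        self._next_seq = 0

    def record(self, value: float) -> None:
        self._pending.append((self._next_seq, value))
        self._next_seq += 1
        self.flush()

    def flush(self) -> None:
        while self._pending:
            if not self._send(self._pending[0]):
                break  # offline or ack lost: keep the message and retry later
            self._pending.popleft()


class Backend:
    """Simulated ingestion that is idempotent on the sequence number."""

    def __init__(self) -> None:
        self.online = True
        self.drop_next_ack = False  # simulate data stored but ack lost in transit
        self.stored: Dict[int, float] = {}

    def receive(self, message: Message) -> bool:
        if not self.online:
            return False
        seq, value = message
        self.stored.setdefault(seq, value)  # a re-sent message is not duplicated
        if self.drop_next_ack:
            self.drop_next_ack = False
            return False
        return True


def missing_sequences(stored: Dict[int, float], expected: int) -> List[int]:
    """Sequence numbers in 0..expected-1 that never reached the backend."""
    return [seq for seq in range(expected) if seq not in stored]
```

A test scenario toggles `online` and `drop_next_ack` while the device keeps recording, then asserts that `missing_sequences` is empty and that the number of stored records equals the number of readings taken.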

Testing priorities at a glance

| Testing area | Healthcare IoT | Industrial IoT | Logistics IoT |
| --- | --- | --- | --- |
| Functional testing | Critical | Critical | Critical |
| Security / pen testing | Mandatory | Mandatory | Recommended |
| Compliance / regulatory | FDA, IEC 62304 | IEC 62443, IEC 61508 | GDP, GS1, GDPR |
| Performance / load | Required | Required | Required |
| Interoperability | HL7 FHIR, EHR | OPC-UA, Modbus | ERP, WMS, TMS |
| Usability testing | Clinical staff | Operators | Field workers |
| Environmental testing | Recommended | Mandatory | Recommended |
| Data integrity | Mandatory | Critical | Cold chain focus |

Wrapping up

IoT testing has grown well beyond performance benchmarks and automation scripts. As the systems themselves become more complex, the scope of what good QA actually means has expanded to match: security vulnerabilities that can cause physical harm, compliance obligations that can block a product from reaching market, and industry-specific constraints that no generic testing approach can fully address.

The cases we’ve worked on, from RAK Wireless to Toyota to Miko 3, reflect this reality. The teams that avoided costly failures weren’t just running more tests. They were testing the right things, at the right time, with a clear understanding of what was actually at stake.

At Yalantis, our IoT testing services are built around that principle. We don’t just validate software. We work across the full stack: firmware, hardware interfaces, communication protocols, cloud infrastructure, and the regulatory requirements that govern how all of it needs to behave. That’s what it takes to ship a connected product with confidence.

If you’re building an IoT system and want to know where your real exposure sits, that’s a conversation worth having early.


FAQ

What is IoT testing and why does it matter?

Internet of Things testing checks if connected devices work well together, stay reliable under pressure, and keep data secure. It’s key to making sure IoT systems deliver what users expect without glitches.

What is the main challenge of IoT device testing?

The main challenge of testing IoT devices and gateways is their diversity. In most cases, IoT QA engineers need to emulate them, which takes time and requires the involvement of a highly skilled IoT development team.

What is the most cumbersome part of IoT testing, and what measures should you take?

The most cumbersome part of Internet of Things testing is related to testing edge devices and gateways. In fact, for testing edge devices and IoT gateways, QA engineers perform the same test types they would do for traditional software testing. To test the network level (gateways) you would usually need functional, security, performance, and connectivity tests. To perform QA of edge devices, functional, security, and usability tests are required. Additionally, there is a need to run compatibility tests.

What is an Internet of Things testing framework?

An Internet of Things testing framework is a structure designed for testing Internet of Things systems using various tools, scripts, scenarios, rules, and templates to ensure overall system quality.

How is testing for IoT applications different from regular app testing?

With IoT you are not just testing software. You are also dealing with hardware, networks, and large volumes of real-time data. That makes IoT app testing more complex and demanding.

What kind of quality assurance solutions for IoT devices are available?

IoT QA testing ranges from functional testing to stress and load testing. It’s about making sure your device works smoothly, even at scale or under tough conditions.

Why is IoT software testing important?

Internet of Things software testing helps make sure everything from your devices to your apps works together smoothly. It’s a key step for catching bugs early, improving performance, and preventing bigger issues after launch.